Operational vs Security Logging for PKI

The certificate management system produces a great deal of logging data. The team that runs it cares about issuance latency, queue depth, and renewal failures. The security operations centre cares about anomalous issuance, policy violations, and mis-issuance signals. The compliance function cares about audit trails and policy enforcement evidence. These three audiences need different data, on different cadences, with different alert thresholds. Most enterprises feed all three from the same dashboard and produce noise for all three.

Part of: Enterprise PKI Operating Model — the pillar page for the operations library.

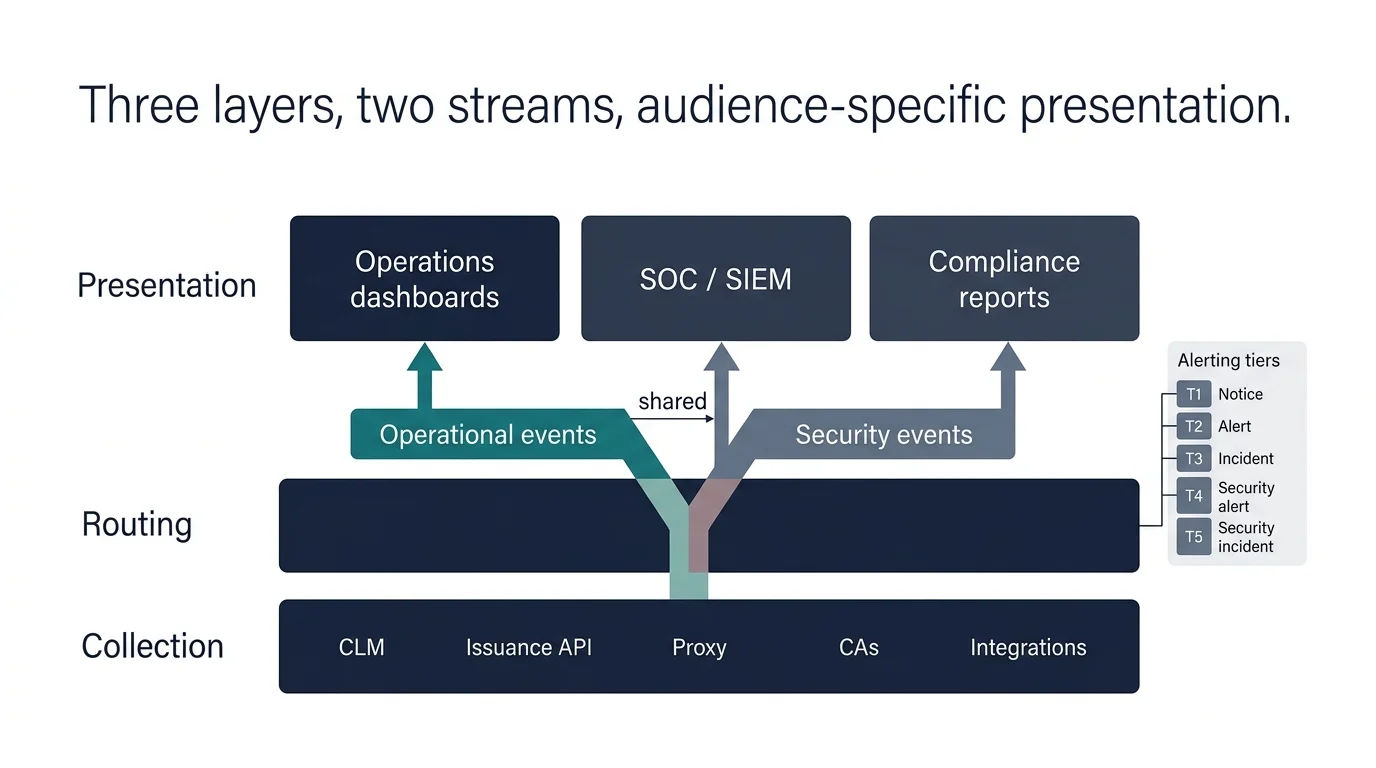

The mature operating model separates the data flows by audience. The operations team has its dashboards. The SOC has its detection rules. Compliance has its audit reports. The same underlying log data feeds all three, but the presentations and alerting are audience-specific.

The two data flows

The simplification that makes this domain tractable is recognising that PKI produces two fundamentally different types of log data, with two different consumers.

Operational logs. Generated by the PKI infrastructure itself: the CLM, the issuance APIs, the proxy layer, the integration points with platforms. These describe the system's own behaviour: how many certificates were issued, how long it took, what errors occurred, what was queued and what was processed. The audience is the team running the system. The cadence is high-frequency (events per minute or per second). The retention requirement is moderate (30–90 days for active operations, longer for trend analysis).

Security logs. Generated by the events that matter to security: issuance that violates policy rules, certificates issued for unexpected SANs, anomalous issuance patterns (sudden volume changes, off-hours issuance, unusual sources), revocation events, trust-store changes, CA-level events. The audience is the SOC and compliance. The cadence is lower-frequency (events per hour or per day, in most environments). The retention requirement is longer (1–7 years, depending on the regulatory framework).

These flows have different schemas, different retention policies, different alerting thresholds, and different dashboards. The operating model designs them as separate flows with shared sources rather than as one flow with two views.
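As a minimal sketch of what "separate flows with shared sources" might look like in configuration, the snippet below declares one policy per flow and checks an event against its flow's retention window. The `FlowPolicy` type, the destination names, and the exact retention values are illustrative assumptions picked from within the ranges given above, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class FlowPolicy:
    audience: str
    retention: timedelta
    destination: str  # hypothetical sink name


# Illustrative values only, chosen from the ranges discussed in the text.
FLOWS = {
    "operational": FlowPolicy("operations team", timedelta(days=90), "observability-stack"),
    "security": FlowPolicy("SOC and compliance", timedelta(days=7 * 365), "siem"),
}


def is_expired(flow: str, event_time: datetime, now: datetime) -> bool:
    """Return True when an event is older than its flow's retention window."""
    return now - event_time > FLOWS[flow].retention


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
event_time = now - timedelta(days=120)
print(is_expired("operational", event_time, now))
print(is_expired("security", event_time, now))
```

Keeping the policy per flow, rather than per source, is what lets the same collected event age out of one destination while remaining available in the other.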

What operational logging covers

The operations team needs visibility into:

Issuance throughput and latency. How many certificates are being issued per unit time, and how long is each issuance taking. Latency anomalies often signal upstream problems (CA outage, network issue, policy validation slowdown) before they manifest as failures. This is the operational side of certificate issuance workflows.

Renewal pipeline health. What renewals are upcoming, in progress, completed, or failed. Renewals stuck in any state are leading indicators of incidents — the connective tissue with the renewal domain.

Discovery freshness. When was the last successful discovery scan against each estate segment. Stale discovery is a silent failure mode that the operations team has to monitor.

Integration health. The status of each integration to a downstream platform — last successful API call, error rates, authentication state. Broken integrations are often discovered only when a service team raises a ticket; proactive monitoring catches them earlier. This is the operational instrumentation behind platform onboarding.

Resource and capacity. The CLM's own resource consumption, queue depths, database performance. Capacity issues that build slowly (storage growth, query latency degradation) are easier to address before they become outages.

SLA-bearing metrics. If the operations team has SLAs to the rest of the organisation — issuance latency, renewal completion, ticket response — these are tracked here and reported on the operations team's cadence.

The operational dashboard is consulted continuously by the team running the service. The alerting on it is tuned to operational thresholds: an issuance failure is an alert; a single retry that succeeded is not.
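The distinction between a failure and a retry that succeeded can be sketched as a small predicate. The `IssuanceAttempt` fields and the `max_quiet_retries` parameter are hypothetical names introduced for illustration; the point is only that the alert decision looks at the outcome and the retry count together.

```python
from dataclasses import dataclass


@dataclass
class IssuanceAttempt:
    request_id: str
    succeeded: bool
    retries: int


def should_alert(attempt: IssuanceAttempt, max_quiet_retries: int = 1) -> bool:
    """Alert on any failure, and on successes that needed more retries than the quiet limit."""
    if not attempt.succeeded:
        return True
    return attempt.retries > max_quiet_retries


print(should_alert(IssuanceAttempt("req-1", succeeded=True, retries=1)))
print(should_alert(IssuanceAttempt("req-2", succeeded=False, retries=0)))
```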

What security logging covers

The SOC and compliance functions need visibility into:

Policy violations. Issuance attempts that violated the established policy — wrong validity, wrong key size, unauthorised SAN, unauthorised CA, off-policy template selection. These are detection signals, not just operational events. Each policy violation should produce a security-relevant log entry whether or not the issuance was completed.

Anomalous issuance patterns. Issuance events that match anomaly patterns: a sudden surge in issuance volume from a usually-low-volume source; issuance during hours that are unusual for the source; issuance for SANs that are unusual for the requestor; issuance involving CAs not normally used by that part of the organisation. These produce alerts that an analyst investigates.

Mis-issuance candidates. Certificates that were issued but match the profile of mis-issuance: certificates for domains the organisation does not own (CT-log monitoring), certificates for domains owned by the organisation but not requested by the central function (shadow IT), certificates from CAs not in the approved list. These are the signals that catch the bad cases that policy controls missed.

Revocation events. Every revocation, with reason code, requestor, and affected certificate. Revocations are operationally significant; they are also security-significant when the revocation is a response to suspected or confirmed compromise.

Trust-store changes. Changes to the trust list — additions, removals, updates. Trust-store changes have a wide blast radius and a security significance that operational changes don't typically have.

CA-level events. Operations performed against the CAs themselves — administrative access, key operations, configuration changes. These are infrequent, high-stakes, and require detailed logging.

Authentication and authorisation events. Who accessed the PKI infrastructure, what actions they performed, what was successful, what was denied. Standard access logging applied to PKI specifically.

The security feed is consulted intermittently — for active incident investigation, for compliance reporting, for periodic threat hunting. The retention is longer; the alerting is tuned to security thresholds.
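To illustrate the point that a policy violation should produce a security log entry whether or not issuance completed, here is a rough sketch. The `IssuanceRequest` fields, the rule names, and the values in `POLICY` are all assumptions made for the example; a real CLM exposes its own request model and policy engine.

```python
import json
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class IssuanceRequest:
    requestor: str
    sans: List[str]
    key_bits: int
    validity_days: int
    ca: str


# Hypothetical policy values for illustration; real limits come from your own policy.
POLICY = {
    "max_validity_days": 398,
    "min_key_bits": 2048,
    "allowed_san_suffixes": (".example.com",),
    "approved_cas": {"internal-issuing-ca"},
}


def find_violations(req: IssuanceRequest) -> List[str]:
    """Return the names of the policy rules this request breaks, in a fixed order."""
    violations = []
    if req.validity_days > POLICY["max_validity_days"]:
        violations.append("wrong_validity")
    if req.key_bits < POLICY["min_key_bits"]:
        violations.append("wrong_key_size")
    if any(not san.endswith(POLICY["allowed_san_suffixes"]) for san in req.sans):
        violations.append("unauthorised_san")
    if req.ca not in POLICY["approved_cas"]:
        violations.append("unauthorised_ca")
    return violations


def security_log_entry(req: IssuanceRequest, issued: bool) -> Optional[str]:
    """Build a JSON security log line for a violating request, issued or not."""
    violations = find_violations(req)
    if not violations:
        return None
    return json.dumps({
        "event": "policy_violation",
        "requestor": req.requestor,
        "sans": req.sans,
        "ca": req.ca,
        "violations": violations,
        "issuance_completed": issued,
    }, sort_keys=True)


blocked = IssuanceRequest("svc-a", ["db.example.org"], 1024, 90, "internal-issuing-ca")
print(security_log_entry(blocked, issued=False))
```

Recording `issuance_completed` as a field, rather than logging only successful issuances, keeps blocked attempts visible to the SOC as detection signals.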

The data architecture

A clean data architecture for PKI logging has three layers:

Collection layer. Both operational and security events are collected from the same sources — the CLM, the proxy, the CAs, the integration endpoints. Collection is consistent; the same log entry might be relevant to both flows.

Routing layer. Collected events are routed to the appropriate downstream systems based on event type and audience. Operational events go to the operations observability stack (typically the same one used for general application monitoring — Datadog, Grafana Cloud, Splunk, ELK). Security events go to the SIEM or security data lake. Some events go to both, with appropriate transformation for each audience.

Presentation layer. Audience-specific presentations of the routed data. Operations dashboards for the operations team. Detection rules and SIEM queries for the SOC. Audit reports and compliance dashboards for compliance.

The separation is at the routing and presentation layers, not at the collection layer. This avoids duplicate collection (expensive and inconsistent) while enabling audience-appropriate presentation.
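The routing layer can be sketched as a lookup from event type to destinations, with a per-audience field selection standing in for the "appropriate transformation". The event-type names, field names, and the decision to send unknown event types to both flows are assumptions made for this example, not part of any specific product.

```python
OPERATIONAL = "operational"
SECURITY = "security"

# Hypothetical event-type names mapped to the flows that should receive them.
ROUTES = {
    "issuance_completed": {OPERATIONAL},
    "issuance_failed": {OPERATIONAL},
    "discovery_scan_completed": {OPERATIONAL},
    "integration_error": {OPERATIONAL},
    "policy_violation": {SECURITY},
    "trust_store_change": {SECURITY},
    "ca_admin_operation": {SECURITY},
    "revocation": {OPERATIONAL, SECURITY},
}

# Fields each audience receives; everything else is dropped for that destination.
FIELDS = {
    OPERATIONAL: ("type", "timestamp", "status", "latency_ms"),
    SECURITY: ("type", "timestamp", "requestor", "serial", "reason"),
}


def route(event: dict) -> dict:
    """Return one audience-specific copy of the event per destination flow."""
    # Unknown types go to both flows so that nothing is silently dropped (an assumption).
    destinations = ROUTES.get(event["type"], {OPERATIONAL, SECURITY})
    return {
        dest: {key: event[key] for key in FIELDS[dest] if key in event}
        for dest in sorted(destinations)
    }


event = {
    "type": "revocation",
    "timestamp": "2025-01-01T00:00:00Z",
    "status": "ok",
    "latency_ms": 120,
    "requestor": "alice",
    "serial": "0A1B",
    "reason": "keyCompromise",
}
print(route(event))
```

The event is collected once and copied at routing time, which matches the principle of separating at the routing and presentation layers rather than at collection.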

Routing details

Events without correlation IDs cannot be linked across systems, so investigations fall back on manual timestamp correlation. Fix critical-path events first (issuance, renewal, deployment, verification), because these are where missing correlation makes investigation hardest.

HTTP / API events (certificate issued, issuance failed, integration error, policy violation on issuance, anomalous issuance pattern). Propagate W3C Trace Context headers (`traceparent`, `tracestate`) across all HTTP API calls. OpenTelemetry SDKs emit these by default; Jaeger and Zipkin client libraries have historically defaulted to their own header formats (`uber-trace-id` and B3 respectively), so check the propagator configuration where those are in use.

Batch / scheduled events (discovery scan completed, trust-store change). Add a structured log field `correlation_id` to every log entry. Generate the ID at the start of the batch run and pass it through every sub-call as a parameter so child events inherit it.
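The batch pattern described above can be sketched with the standard library alone. The function names (`log_event`, `scan_segment`, `run_discovery`) and the event names are hypothetical; the only part taken from the text is that the ID is generated once at the start of the run and passed explicitly to every sub-call.

```python
import json
import uuid
from typing import List


def log_event(correlation_id: str, event: str, **fields) -> str:
    """Emit one structured log line that always carries the correlation ID."""
    line = json.dumps({"correlation_id": correlation_id, "event": event, **fields}, sort_keys=True)
    print(line)
    return line


def scan_segment(correlation_id: str, segment: str) -> str:
    # A real scan would happen here; the sub-call inherits the ID as a parameter.
    return log_event(correlation_id, "segment_scanned", segment=segment)


def run_discovery(segments: List[str]) -> List[str]:
    """Generate the ID once per batch run and thread it through every child event."""
    correlation_id = str(uuid.uuid4())
    lines = [log_event(correlation_id, "discovery_scan_started", segment_count=len(segments))]
    lines += [scan_segment(correlation_id, segment) for segment in segments]
    lines.append(log_event(correlation_id, "discovery_scan_completed"))
    return lines


run_discovery(["dmz", "internal"])
```

Passing the ID as an explicit parameter is more verbose than ambient context, but it makes it obvious in code review when a sub-call has dropped it.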

Alert design

Alert design is where most PKI monitoring fails. Two failure patterns are pervasive:

Alert on every event. Every issuance generates an alert. The team is overwhelmed; alerts are ignored; real signals are missed. This is the configuration that ships out of the box from many platforms; it has to be reduced before the alerting becomes useful.

Alert only on terminal failures. Alerts fire only when something has visibly broken. Earlier signals — increasing latency, retry rates ticking up, discovery scan duration extending — are not surfaced. By the time the alert fires, the incident has already started.

The mature pattern: tiered alerting on leading indicators.

Tier 1 — Operational notice. A notification, not an alert, sent to a non-paging channel. Examples: queue depth above normal but below threshold, retry rate above baseline, discovery scan completion delayed but successful. The team sees these in passing during normal work; no-one is paged.

Tier 2 — Operational alert. An alert that requires human attention but not necessarily immediate response. Examples: a renewal that has failed once and is being retried, a discovery scan that has not completed, an integration error rate exceeding threshold. Paged to the on-call engineer if outside business hours; surfaced in the team channel during business hours.

Tier 3 — Operational incident. An alert that requires immediate response. Examples: issuance failure for a high-priority certificate, renewal failure approaching expiry, integration outage affecting production. Paged to on-call regardless of time; incident response process activated.

Tier 4 — Security alert. A security-relevant event requiring SOC attention. Examples: issuance against policy, anomalous issuance pattern, certificate from unauthorised CA, trust-store change without change ticket. Routed to the SOC; analyst investigates per their workflow.

Tier 5 — Security incident. A confirmed security event requiring incident response. Examples: confirmed mis-issuance, key compromise indicator, unauthorised CA operation. Activates the security incident response process.

The thresholds for each tier are parameterised against the team's capacity and the estate's operational profile. A team that can absorb 20 tier-2 alerts a week tunes the threshold to that capacity; a smaller team tunes for fewer.
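A rough sketch of the tier model as code follows. The event kinds, the threshold keys, and the choice to treat a queue depth at or above the alert threshold as tier 2 are assumptions for illustration; the tier meanings follow the list above, and the thresholds are passed in as parameters so they can be tuned to the team's capacity.

```python
from enum import IntEnum
from typing import Optional


class Tier(IntEnum):
    NOTICE = 1
    OPS_ALERT = 2
    OPS_INCIDENT = 3
    SECURITY_ALERT = 4
    SECURITY_INCIDENT = 5


SECURITY_ALERT_KINDS = {"policy_violation", "anomalous_issuance", "unauthorised_ca"}
SECURITY_INCIDENT_KINDS = {"confirmed_misissuance", "key_compromise_indicator"}


def classify(event: dict, thresholds: dict) -> Optional[Tier]:
    """Map an event to a tier, or None when it should not notify anyone."""
    kind = event["kind"]
    if kind in SECURITY_INCIDENT_KINDS:
        return Tier.SECURITY_INCIDENT
    if kind in SECURITY_ALERT_KINDS:
        return Tier.SECURITY_ALERT
    if kind == "renewal_failed":
        # A failure close to expiry is an incident; otherwise it is retried and alerted.
        if event["days_to_expiry"] <= thresholds["incident_days_to_expiry"]:
            return Tier.OPS_INCIDENT
        return Tier.OPS_ALERT
    if kind == "queue_depth":
        if event["depth"] >= thresholds["queue_alert"]:
            return Tier.OPS_ALERT
        if event["depth"] > thresholds["queue_normal"]:
            return Tier.NOTICE
    return None


thresholds = {"incident_days_to_expiry": 7, "queue_normal": 50, "queue_alert": 200}
print(classify({"kind": "queue_depth", "depth": 80}, thresholds))
print(classify({"kind": "renewal_failed", "days_to_expiry": 3}, thresholds))
```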

Demotion candidates

The categories that produce the most noise relative to action are candidates for demotion. Demoting a category to T1 (notice) removes it from the alert count while keeping its events visible on the operations channel.

Where logging breaks

One dashboard, two audiences. The operations team and the SOC share a dashboard. The operations team sees too many security events; the SOC sees too much operational noise. Both audiences mentally filter; both miss things. The fix is audience-specific presentation.

Logs as compliance theatre. Logs are collected because compliance requires it. Nobody reads them. Storage costs grow. The first time an audit asks for a specific event, it takes days to retrieve. The fix is treating logs as operational instruments, not just compliance artefacts.

Alert fatigue from poor classification. Every event raises an alert. Eventually the team mutes the channel. Real incidents are missed. The fix is the tiered alerting model — most events are notices, fewer are alerts, fewest are incidents.

Retention misaligned with use. Operational logs retained for 7 years (because compliance asked); security logs retained for 30 days (because someone forgot to extend the retention). The first is expensive without value; the second is missing audit data when needed. The fix is retention policies set by audience and event type, not as a single estate-wide policy.

Logs without correlation IDs. A certificate was issued, then deployed, then verified. Three log entries from three systems, with no way to link them. Investigating a specific certificate's lifecycle requires manual correlation across systems. The fix is propagating a correlation ID through the workflow so that all events for a single certificate event chain are linkable. The propagation pattern is W3C Trace Context for HTTP-based events, structured log fields for batch processes, and message metadata for queue-based events — applied consistently across every event source.
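For the HTTP case, the sketch below shows the shape of a W3C `traceparent` header and how a downstream call keeps the same trace ID while minting a new parent ID. In practice an OpenTelemetry SDK would normally handle this; the hand-rolled helpers here (`new_traceparent`, `child_traceparent`) are hypothetical and skip some validation the specification requires, such as rejecting all-zero IDs.

```python
import re
import secrets

# Version 00 layout: version, 32-hex trace-id, 16-hex parent-id, 2-hex flags.
TRACEPARENT_RE = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def new_traceparent(sampled: bool = True) -> str:
    """Start a new trace, for example when a certificate request first arrives."""
    flags = "01" if sampled else "00"
    return f"00-{secrets.token_hex(16)}-{secrets.token_hex(8)}-{flags}"


def child_traceparent(incoming: str) -> str:
    """Build the header for an outgoing call: same trace ID, fresh parent ID."""
    match = TRACEPARENT_RE.match(incoming)
    if match is None:
        # An unparseable header cannot be continued, so start a new trace instead.
        return new_traceparent()
    trace_id, _parent_id, flags = match.groups()
    return f"00-{trace_id}-{secrets.token_hex(8)}-{flags}"


issued = new_traceparent()
deployed = child_traceparent(issued)
print(issued)
print(deployed)
```

Because the issuance, deployment, and verification events would all log the same trace ID, a query on that one value retrieves the full chain for a certificate.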

Maturity progression for PKI logging

The five-level PKI operational maturity model introduced in the pillar maps onto the logging and monitoring domain as follows.

Level 1 — Ad-hoc. Logs are produced by the CLM and integrations but no consistent collection or routing exists. The operations team checks logs reactively — usually after an incident has been reported through other channels. There is no audience separation; whoever asks gets the raw logs and is left to filter them. Alert configuration is whatever shipped with the product; it is either off or noisy enough to be muted.

Level 2 — Tooled. Logs are collected to a central observability platform (Splunk, Datadog, Grafana, ELK). Some dashboards exist. The operations team and the SOC look at the same dashboards and complain about the noise. Alerting fires but the team is ambivalent about which alerts matter. Retention is configured but not by audience — typically a single retention policy set by whoever asked first.

Level 3 — Operationalised. The two data flows are explicitly separated at the routing layer. Operational events go to the operations observability stack; security events go to the SIEM. The tiered alerting model (T1–T5) is in place with documented thresholds. Each audience has its own dashboards. The operations team and the SOC are no longer fighting over the same screen.

Level 4 — Integrated. Three structural elements operate together: correlation IDs propagated end-to-end so that every certificate event chain is queryable as a single trace; noise-to-signal ratio actively monitored per alert category and tuned quarterly; retention policies set by audience and event type rather than as a single estate-wide policy. Logs are operational instruments, not compliance artefacts.

Level 5 — Intelligent. Anomaly detection runs against the log streams to surface patterns the explicit alerting rules miss. Alert tuning is data-driven — categories with low actionability are demoted automatically; categories that produce action are promoted. Security signals from PKI feed broader detection — certificate-issuance anomalies become inputs to the SOC's threat-hunting workflow rather than isolated alerts.

Most enterprises sit between levels 1 and 2 because logging is technically present but not architecturally separated by audience. The progression to level 3 is achievable within a quarter once the routing layer is built — but most organisations defer it because it looks like duplicating work. The transition from L2 to L3 is harder than L1 to L2 precisely because it requires deliberate audience separation rather than just collecting more data.
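The per-category noise-to-signal tuning described for the higher maturity levels might be approached along these lines. The alert record shape (`category`, `actioned`) and the default thresholds are assumptions for the example; the function simply ranks categories whose alerts rarely lead to action.

```python
from collections import Counter
from typing import Dict, List, Tuple


def demotion_candidates(
    alerts: List[Dict],
    max_action_ratio: float = 0.1,
    min_volume: int = 10,
) -> List[Tuple[str, float]]:
    """Return (category, action ratio) pairs for noisy, rarely actioned categories."""
    fired = Counter(alert["category"] for alert in alerts)
    actioned = Counter(alert["category"] for alert in alerts if alert["actioned"])
    candidates = []
    for category, count in fired.items():
        if count < min_volume:
            continue  # too few alerts to judge the category
        ratio = actioned[category] / count
        if ratio <= max_action_ratio:
            candidates.append((category, ratio))
    # Least actionable first; the name breaks ties so the order is stable.
    return sorted(candidates, key=lambda item: (item[1], item[0]))


history = (
    [{"category": "retry_succeeded", "actioned": False}] * 20
    + [{"category": "renewal_failed", "actioned": True}] * 8
    + [{"category": "renewal_failed", "actioned": False}] * 2
)
print(demotion_candidates(history))
```

The `min_volume` guard avoids demoting a rare category on the basis of a handful of alerts, which matters most for infrequent but high-stakes events.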

Further reading within this cluster

- Enterprise PKI operating model — the pillar page

- Certificate governance and the steering function

- Certificate discovery in practice

- Certificate issuance workflows

- Certificate renewal operations

- Certificate revocation operations

- Platform onboarding for certificate automation

- Trust-store management

- PKI incident management

- Change management for PKI operations