Certificate Renewal Operations

Renewal is the operational function that ensures a live certificate is replaced before it expires, without service disruption. It looks similar to issuance — both produce a new certificate — but the operational characteristics are fundamentally different. Issuance creates a new asset; renewal replaces a working one. The mature operating model treats them as separate domains.

Part of: Enterprise PKI Operating Model — the pillar page for the operations library.

Most certificate-driven incidents are renewal incidents. The certificate worked yesterday, expired today, and the service stopped working. This is rarely a tooling failure. It is almost always a renewal-process failure: the renewal didn't happen, didn't happen in time, or didn't deploy successfully.

Most certificate lifecycle management (CLM) vendors sell renewal as “set and forget”. In practice, renewal is the single largest source of certificate-driven outages in production estates. The discrepancy between marketing and operational reality is not subtle: it is the gap between selling automation as a capability and operating it as a discipline.

Why renewal is structurally harder than issuance

Three properties make renewal operationally distinct from issuance:

Renewal is time-pressured by definition. Every renewal has a deadline — the expiry of the existing certificate. Issuance has no inherent deadline; if it fails, the service team retries. Renewal failure has a hard deadline beyond which the service breaks. This changes the failure-handling profile entirely.

Renewal must succeed before the deadline. Issuance can fail repeatedly without consequence: the team retries, the certificate eventually issues, the service goes live. A renewal that has still not succeeded when the previous certificate expires has produced a service outage. The retry budget is fixed by the renewal buffer; once it is exhausted, the outage has begun.

Renewal touches a service that is in production. Issuance is for a service being stood up. Renewal is for a service that is currently serving customers. The deployment of the renewed certificate is a change to a live service, with all the change-management implications that follow. Renewal that requires service restart, configuration reload, or load-balancer reconfiguration is not a self-contained operation — it is a change event.

These three properties dictate the structure of renewal operations.

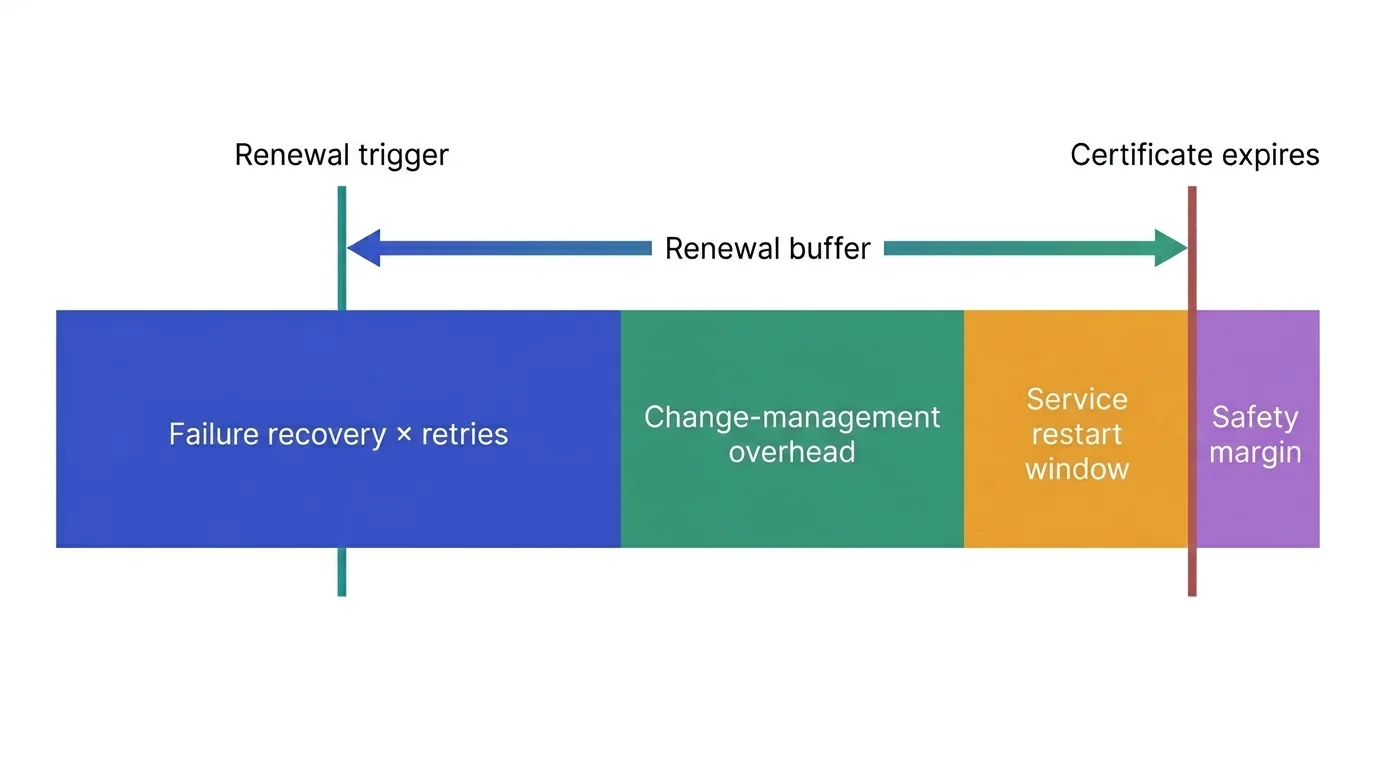

The renewal buffer — the parameter that decides everything

The renewal buffer is the time between when renewal begins and when the existing certificate expires. Sized correctly, it absorbs renewal failures, allows retries, and provides change-management runway. Sized incorrectly, it produces incidents.

The right buffer is determined by five parameters specific to your operations:

Validity period of the certificate. Shorter validity (90 days for typical Let's Encrypt certificates, and a 47-day maximum for public TLS certificates once the final phase of CA/Browser Forum Ballot SC-081v3 takes effect in 2029) requires shorter buffers in absolute terms but more frequent renewal events. Longer validity (1 year, 397 days) tolerates longer buffers because the renewal event is less frequent.

Deployment automation level. Fully automated deployment (cert-manager, ACME with auto-deploy hooks, infrastructure-as-code with rolling deployment) needs short buffers — minutes to hours for retries. Manual deployment (someone has to log in and replace the certificate file) needs days, sometimes weeks, to allow for the human step.

Service restart requirement. Whether the service must restart to pick up a new certificate, or whether it can hot-swap. Hot-swap services (modern HTTP servers with reload signals, services that re-read certificates per connection, sidecar-managed certificates) deploy renewals invisibly. Restart-required services (older Apache configurations, IIS, F5 hardware, mainframe applications, anything that holds the certificate in process-loaded state) need a coordinated restart, which adds change-management time even when the cert deployment itself is automated. This is the dominant hidden constraint for many traditional platforms.

Failure recovery time. How long does it take to detect a renewal failure, diagnose the cause, and complete a successful renewal? Failure recovery time is the dominant factor in buffer sizing for most operations. A team that can detect, diagnose, and remediate in 4 hours can run with a 24-hour buffer; a team that requires 3 days can't.

Change-management overhead. Some services cannot deploy a renewed certificate without a change ticket. Some require maintenance windows. Some require customer notification. Each adds to the buffer requirement.

The general formula:

renewal_buffer = (max_failure_recovery × retry_count) + change_management_overhead + service_restart_window + safety_margin

Most enterprises run with too small a buffer because they sized it against the happy path. The right buffer accommodates the worst plausible failure recovery scenario, not the average.
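The formula can be applied term by term. The sketch below is a minimal illustration, assuming each term is expressed as a duration; the `BufferInputs` container and the example figures are hypothetical and are not drawn from any particular CLM.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class BufferInputs:
    """Hypothetical container for the terms of the general formula."""
    max_failure_recovery: timedelta        # worst plausible detect + diagnose + fix time
    retry_count: int                       # number of failed attempts the buffer must absorb
    change_management_overhead: timedelta  # tickets, approvals, notifications
    service_restart_window: timedelta      # zero for hot-swap services
    safety_margin: timedelta


def renewal_buffer(inputs: BufferInputs) -> timedelta:
    """Sum the terms exactly as the general formula states them."""
    return (
        inputs.max_failure_recovery * inputs.retry_count
        + inputs.change_management_overhead
        + inputs.service_restart_window
        + inputs.safety_margin
    )


# Illustrative figures only: a team that needs up to 3 days to recover from
# a failed renewal, on a service that requires a scheduled restart.
example = BufferInputs(
    max_failure_recovery=timedelta(days=3),
    retry_count=2,
    change_management_overhead=timedelta(days=5),
    service_restart_window=timedelta(days=2),
    safety_margin=timedelta(days=3),
)
print(renewal_buffer(example).days)  # 3*2 + 5 + 2 + 3 = 16
```

Feeding the function the worst plausible recovery time, rather than the average, is what separates a buffer sized for failure from one sized for the happy path.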

For automated deployment with hot-swap and strong monitoring: typically 7–14 days for 90-day certificates, 30–45 days for 1-year certificates.

For automated deployment with required restart: typically 14–21 days for 90-day certificates, 45–60 days for 1-year certificates.

For manual deployment: typically 30 days minimum for 90-day certificates, 60–90 days for 1-year certificates.

For services without dedicated certificate ownership: longer than the operations team would prefer, because the renewal process includes finding the owner.

When the service restart window dominates the renewal buffer, the biggest investment opportunity is graceful-reload or hot-swap capability, so that the renewed certificate can be deployed without a restart. For services that genuinely cannot reload, the next-best investment is a documented maintenance-window schedule that pre-allocates restart slots, eliminating the per-renewal scheduling latency.

The renewal workflow

A complete renewal workflow has six stages, each with its own failure modes:

Trigger. Renewal is initiated based on the buffer threshold. This is automated against the certificate expiry date in the CLM. The trigger has to be reliable — a missed trigger is a missed renewal.

Pre-flight checks. Before issuing the renewal, verify that the current configuration is still valid: the SAN list is current, the requesting service is still authorised, the CA is still operational, the policy hasn't changed in ways that affect this certificate. Pre-flight catches issues before the issuance step, while they are still easier to remediate.

Issuance. The renewed certificate is issued through the same workflow as initial issuance (combined or admin-mediated). The result is a certificate that is ready for deployment but not yet deployed.

Deployment. The renewed certificate is deployed to the service. This is the high-risk step because it touches a live system. Deployment patterns vary: hot-swap (the service picks up the new certificate without restart), graceful-restart (the service is restarted with no service disruption to active sessions), maintenance-window (the service is briefly unavailable). The right pattern depends on the service architecture.

Verification. After deployment, verify the new certificate is in use and the service is healthy. The verification has to be active, not assumed — checking that the new certificate is presented on the network endpoint, checking that the service is responding correctly, checking that monitoring shows green. A renewal that issued correctly but failed to deploy looks identical to a successful renewal until the old certificate expires.

Old-certificate handling. The old certificate is now superseded. Depending on the certificate type, it may need to be revoked (rare for renewals — only revoke if the old key is compromised), removed from trust stores, or simply allowed to expire naturally. Documentation is updated.

The mature pattern is that all six stages are automated end-to-end for the certificate types that support combined workflow, with manual approval gates only at points where governance requires them. The immature pattern is that issuance is automated but the team manages deployment and verification manually — which works until volume scales.
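One way to make the six stages explicit in automation is to model them as an ordered sequence and stop at the first stage that does not complete. The sketch below is an assumption-laden illustration: the `Stage` names and the `run_renewal` function are hypothetical, and each handler stands in for whatever tooling actually performs that stage.

```python
from enum import Enum
from typing import Callable, Dict, Optional


class Stage(Enum):
    """The six workflow stages, in execution order (names are illustrative)."""
    TRIGGER = 1
    PREFLIGHT = 2
    ISSUANCE = 3
    DEPLOYMENT = 4
    VERIFICATION = 5
    OLD_CERT_HANDLING = 6


def run_renewal(handlers: Dict[Stage, Callable[[], bool]]) -> Optional[Stage]:
    """Run the stages in order.

    Returns the first stage that failed or had no handler, or None when
    all six stages completed. A missing handler counts as a failure so a
    partially automated workflow can never report itself as complete.
    """
    for stage in Stage:  # Enum iteration follows definition order
        handler = handlers.get(stage)
        if handler is None or not handler():
            return stage
    return None
```

Returning the failed stage, rather than a bare success flag, gives the operations team the point at which a renewal stalled.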

The renewal observability problem

You cannot operate a renewal process you cannot see. There are three observability requirements:

Forward visibility. What certificates are due for renewal, when, and through which workflow? The forward queue is the planning tool — it tells the operations team what work is upcoming and lets them spot anomalies (a sudden cluster of renewals on the same day, certificates due for renewal that have no clear owner, certificates whose renewal will require coordination across teams).

Real-time status. For renewals in progress, where are they in the workflow? Triggered, issued, deployed, verified, complete? The real-time status enables intervention when a renewal stalls.

Historical analysis. Across the renewal events of the last quarter, what failed, what was retried, what was deployed late, what required manual intervention? Historical analysis is what tells the operations team whether the renewal buffer is correctly sized for their actual failure profile, or whether they have been fortunate.

Most CLMs provide some version of forward visibility. Many provide real-time status for the renewals they manage. Few provide historical analysis that supports buffer-sizing decisions. The operations function builds this layer, typically in their own observability stack.
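A historical-analysis layer can start small. The sketch below assumes the team already records, for each completed renewal, how many attempts it took, how much validity was left on the old certificate when the new one was verified live, and whether a human had to intervene. The `RenewalRecord` fields and the `summarise` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Sequence


@dataclass(frozen=True)
class RenewalRecord:
    """One completed renewal event (hypothetical schema)."""
    attempts: int                   # 1 means it succeeded first time
    days_left_at_completion: float  # validity left on the old certificate
    manual_intervention: bool


def summarise(records: Sequence[RenewalRecord], buffer_days: float) -> Dict[str, float]:
    """Summarise how hard past renewals leaned on the buffer."""
    if not records:
        raise ValueError("no renewal records to analyse")
    total = len(records)
    retried = sum(1 for r in records if r.attempts > 1)
    manual = sum(1 for r in records if r.manual_intervention)
    closest_call = min(r.days_left_at_completion for r in records)
    return {
        "retried_fraction": retried / total,
        "manual_fraction": manual / total,
        # Share of the buffer consumed by the slowest renewal; values near
        # 1.0 suggest the buffer only just held.
        "worst_case_buffer_used": (buffer_days - closest_call) / buffer_days,
    }
```

A worst-case figure close to 1.0 across a quarter is the signal that the team has been fortunate rather than correctly sized.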

Where renewal breaks

Renewal triggers that fire but produce no action. The CLM logged a renewal trigger 14 days before expiry. No certificate was issued. No alert was raised. The trigger was logged but the downstream automation failed silently. This is the most common renewal failure mode and the hardest to detect — there is no error, only the absence of an expected event. The fix is to alert on the absence of expected events, not just on errors — if a trigger fires and no issuance follows within the expected window, that is itself an incident. See also incident management.
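Alerting on an absent event amounts to comparing two event streams. The sketch below assumes trigger and issuance timestamps can be exported per certificate; the `silent_triggers` function and its parameters are hypothetical, not part of any CLM API.

```python
from datetime import datetime, timedelta
from typing import Dict, List


def silent_triggers(
    triggers: Dict[str, datetime],
    issuances: Dict[str, datetime],
    window: timedelta,
    now: datetime,
) -> List[str]:
    """Return IDs of certificates whose trigger fired more than `window`
    ago with no issuance recorded at or after the trigger time."""
    stalled = []
    for cert_id, fired_at in triggers.items():
        issued_at = issuances.get(cert_id)
        # An issuance from a previous renewal cycle predates the trigger
        # and must not be counted as a response to it.
        followed = issued_at is not None and issued_at >= fired_at
        if not followed and now - fired_at > window:
            stalled.append(cert_id)
    return sorted(stalled)
```

Every ID returned is a trigger that produced no action, which the paragraph above treats as an incident in its own right.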

Successful issuance, failed deployment. The renewed certificate was issued and stored in the CLM. It was never installed on the service. The next time the service team checks, the old certificate has expired and the service is down. The fix is to instrument the deployment step end-to-end — issuance is not deployment, and the renewal workflow is not complete until the new certificate is verified live on the service.

Verification that confirms the wrong thing. The verification step checks that the certificate is valid, but doesn't check that it is the renewed certificate. The old certificate, still in place, passes the validity check until it expires. The fix is verification that confirms the certificate fingerprint matches the renewed one, not just that some valid certificate is present.
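Fingerprint-based verification can be sketched with the Python standard library alone. The example assumes the renewed certificate is available as DER bytes from the issuance step; the function names are illustrative, and the handshake behaviour of `ssl.get_server_certificate` (for example, how it handles SNI) may vary between Python versions.

```python
import hashlib
import ssl


def sha256_fingerprint(der_bytes: bytes) -> str:
    """SHA-256 fingerprint of a DER-encoded certificate, as lowercase hex."""
    return hashlib.sha256(der_bytes).hexdigest()


def presented_certificate_der(host: str, port: int = 443) -> bytes:
    """Fetch the leaf certificate the endpoint currently presents.

    Without a ca_certs argument, ssl.get_server_certificate does not
    validate the chain. That is acceptable here because the check is
    identity of the certificate, not trust in it.
    """
    pem = ssl.get_server_certificate((host, port))
    return ssl.PEM_cert_to_DER_cert(pem)


def is_renewed_certificate_live(presented_der: bytes, renewed_der: bytes) -> bool:
    """True only when the endpoint presents exactly the renewed certificate."""
    return sha256_fingerprint(presented_der) == sha256_fingerprint(renewed_der)
```

In use, the output of `presented_certificate_der` for the service's hostname would be compared against the DER bytes saved at issuance. An old certificate that is still in place fails this check even while it remains valid.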

Sudden renewal storms. A large number of certificates were issued at the same time historically (during a migration, a platform stand-up, a rebuild) and now all renew on the same day. The renewal queue exceeds operational capacity. The fix is forward visibility on the renewal calendar to detect storms in advance, plus deliberate staggering of issuance dates during future bulk events to prevent the next storm.
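Storm detection and staggering are both simple once the renewal calendar is available as data. The sketch below assumes a list of expiry dates and a per-day capacity figure; `detect_storm_days`, `stagger`, and the figures in the usage note are hypothetical.

```python
from collections import Counter
from datetime import date, timedelta
from typing import Dict, List, Sequence


def detect_storm_days(expiries: Sequence[date], daily_capacity: int) -> List[date]:
    """Return the days on which more certificates expire than the team
    can renew in one day."""
    counts = Counter(expiries)
    return sorted(day for day, n in counts.items() if n > daily_capacity)


def stagger(cert_ids: Sequence[str], start: date, days: int) -> Dict[date, List[str]]:
    """Spread renewals round-robin across `days` consecutive days from `start`."""
    if days < 1:
        raise ValueError("days must be at least 1")
    plan: Dict[date, List[str]] = {
        start + timedelta(days=i): [] for i in range(days)
    }
    for index, cert_id in enumerate(cert_ids):
        plan[start + timedelta(days=index % days)].append(cert_id)
    return plan
```

As an illustration, staggering 80 certificates across 14 days yields batches of five or six per day. The stagger window has to end before the earliest expiry in the storm, with the normal renewal buffer still intact.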

Service ownership turnover. The certificate has been renewing for years. The service team has changed three times. The current team has no record that the certificate exists, no understanding of what it does, and no automation to renew it because the previous team's automation was decommissioned. This is a discovery problem manifesting as a renewal problem. The fix is to bind certificate ownership to the service record in the CMDB so ownership transitions automatically when service ownership changes.

Maturity progression for renewal

The five-level PKI operational maturity model introduced in the pillar maps onto the renewal domain as follows.

Level 1 — Ad-hoc. Certificate renewals happen reactively. The team finds out a certificate is expiring when it does, or close to it. There is no central tracking. Renewal is calendar-driven for the certificates anyone remembers, ad-hoc for everything else. Certificate-driven incidents are routine and treated as individual events rather than systemic ones.

Level 2 — Tooled. A CLM tracks certificate expiry dates and generates renewal triggers. Some renewals are automated (typically public TLS via ACME); most still require manual intervention. The team works through the upcoming-renewal queue but the queue exceeds capacity at peak times. Sudden incidents still happen — usually for certificates the CLM didn't know about, or where the automated renewal failed silently.

Level 3 — Operationalised. Renewal is a defined operational function with a documented six-stage workflow. The renewal buffer is sized correctly for the team's capacity and the deployment characteristics, including service-restart constraints. Verification is end-to-end, not just at issuance. Renewal incidents are categorised in the post-incident process. The renewal queue is visible forward and historically.

Level 4 — Integrated. Renewal feeds the change-management calendar, capacity-planning forecasts, and the broader service operations dashboard. Renewal storms are detected weeks in advance and staggered automatically. The team is sized for steady state, not peak storm; storms have been engineered out by deliberate stagger of issuance dates.

Level 5 — Intelligent. Renewal patterns produce operational intelligence. Anomalies in the renewal stream trigger investigation before they cause incidents. The renewal function is increasingly invisible because it operates correctly with high reliability — operations time has shifted to forward planning and continuous improvement rather than active firefighting.

Most enterprises sit between levels 1 and 2 on renewal. Progression to level 3 is achievable within six to nine months once renewal is recognised as a discrete operational domain rather than a feature of the CLM.