Certificate Incident Management

A certificate incident is what happens when a certificate-related failure causes a service disruption. The most common form is the certificate-expiry incident: a certificate expired, the service that depended on it stopped working, the team scrambled. There are other forms — revocation propagation issues, trust-store mismatches, CA outages, mis-issuance events — but the expiry incident is by far the most frequent and the most preventable.

Part of: Enterprise PKI Operating Model — the pillar page for the operations library.

The expiry incident remains common despite mature CLM tooling, and the reason is operational, not technical. The tools exist; the operating model has not been built around them. Certificate incidents fall into the gap between certificate operations and general service operations, and that gap has its own characteristic failure modes.

Why certificate incidents are operationally distinct

The cause profile is specific. Generic service incidents have a wide variety of causes: code defects, infrastructure failures, configuration errors, capacity issues, dependency failures, security events. Certificate incidents have a narrow cause profile: expired certificate, revoked certificate, untrusted certificate, mismatched certificate, CA outage. The narrowness allows category-specific runbooks that wouldn't be productive for general incidents.

The remediation is workflow-specific. Restoring a service from a certificate incident requires understanding the certificate lifecycle: where to issue a replacement, how to deploy it, how to verify, how to handle the original. The standard service-incident remediation playbook (restart, rollback, escalate) doesn't apply directly. Certificate operations expertise is needed.

The RACI is split between teams. The service that broke is owned by one team. The certificate that caused the breakage is owned (or should be owned) by another. The incident commander has to coordinate across both. In the common case where neither team has been clearly assigned ownership of the certificate, incident commanders default to whichever team is on the call first — which is rarely the right answer.

The detection signal is often weak. A service that stopped responding because of an expired certificate produces the same symptoms as many other failures: the service is down, errors appear in logs, alerts fire. Recognising the incident as a certificate-expiry incident often depends on a human noticing the timing ("wait, when did that certificate expire?") rather than on automated detection. This delays remediation.
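The timing check a human does by eye can be approximated in a few lines. The following is a minimal sketch, not a production detector: the function names and the 15-minute tolerance are assumptions made for illustration, and the `notAfter` string is assumed to be in the format Python's `ssl` module reports.

```python
import ssl
from datetime import datetime, timezone


def expired_at(not_after: str) -> datetime:
    """Parse a notAfter string such as 'Jun  1 12:00:00 2025 GMT' into a UTC datetime."""
    return datetime.fromtimestamp(ssl.cert_time_to_seconds(not_after), tz=timezone.utc)


def expiry_explains_outage(not_after: str, first_error_at: datetime,
                           tolerance_minutes: float = 15.0) -> bool:
    """True when errors began within `tolerance_minutes` after the certificate expired."""
    lag = (first_error_at - expired_at(not_after)).total_seconds() / 60.0
    return 0.0 <= lag <= tolerance_minutes


# Hypothetical values: a certificate's notAfter and the first alert timestamp.
print(expiry_explains_outage(
    "Jun  1 12:00:00 2025 GMT",
    datetime(2025, 6, 1, 12, 3, tzinfo=timezone.utc),
))
```

A check like this only suggests a cause. Confirming it still requires inspecting the certificate the service is actually presenting.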

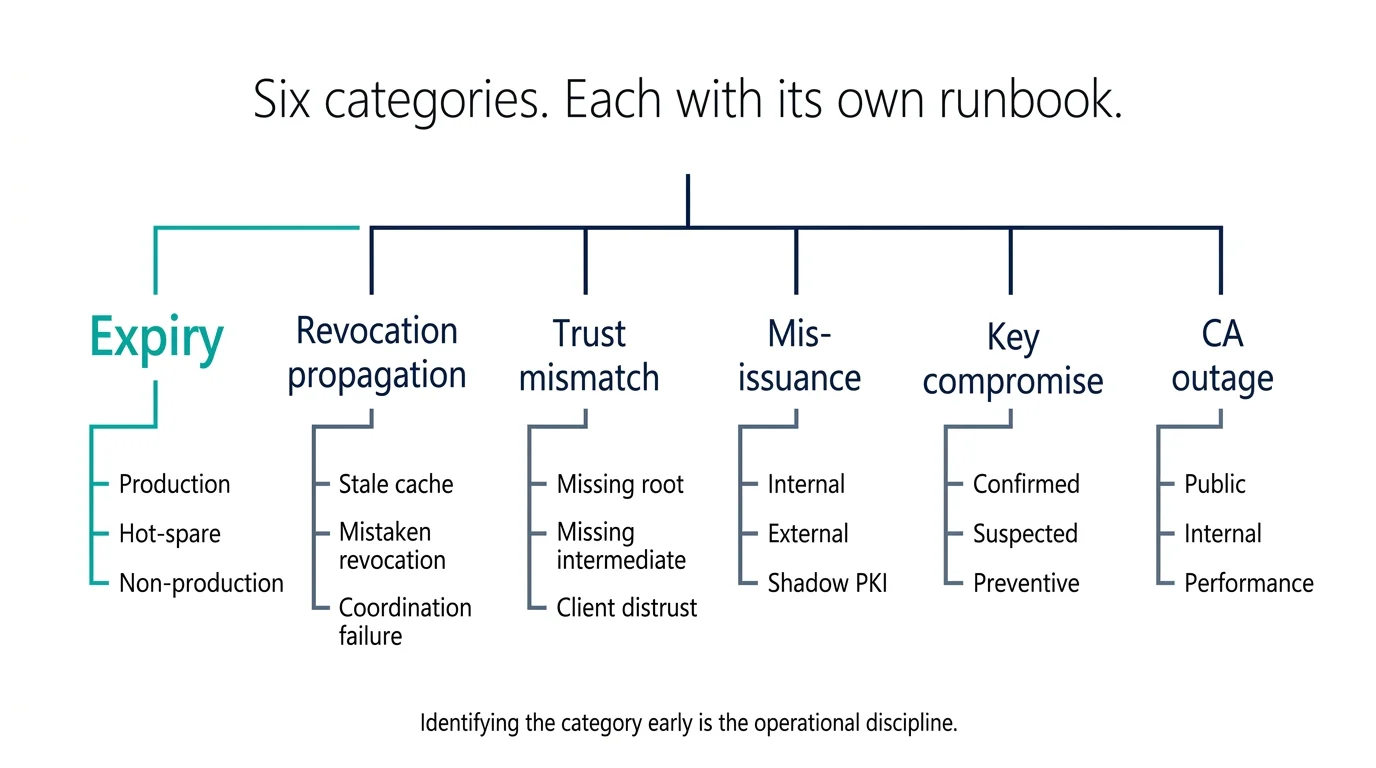

The certificate incident categories

A useful incident management approach treats certificate incidents as a category with subcategories, each with its own response pattern.

1. Expiry incident. A certificate expired and the service depending on it failed. Subcategories: expired in production (active outage), expired but service is hot-spare (degraded redundancy, not yet user-impacting), expired in non-production (environment broken, no customer impact).

2. Revocation propagation incident. A certificate was revoked, but downstream verifiers are still accepting it; or a certificate was revoked unintentionally and services that depend on it are failing. Subcategories: stale revocation cache, mistaken revocation, revocation-after-replacement coordination failure.

3. Trust-mismatch incident. A service is presenting a valid certificate but a client is not trusting it, typically because the client's trust store is missing the issuing CA or the server is not sending the full chain. Subcategories: missing root, missing intermediate (chain not sent), trust-store update incomplete, client-specific trust restriction (browser distrust, OS distrust).

4. Mis-issuance incident. A certificate was issued in violation of policy or for an entity that should not have received it. Subcategories: internal mis-issuance (policy violation), external mis-issuance (CA error), shadow PKI (certificate issued by an unauthorised internal CA).

5. Key compromise incident. The private key has been exposed. Subcategories: confirmed compromise (key recovered by attacker), suspected compromise (indicators present, exploitation not confirmed), preventive rotation (no compromise but key being rotated as a precaution).

6. CA outage incident. The CA itself is unavailable. Subcategories: public CA outage (rare but real: Let's Encrypt has had brief outages, and other public CAs have had longer ones), internal CA outage (issuance pipeline broken), CA performance degradation (issuing but slowly).

Each subcategory has a specific runbook. The incident commander identifies the category early, selects the runbook, and follows the documented response pattern.
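Early category recognition can be partly mechanised for the categories that surface as TLS verification failures. The sketch below maps OpenSSL verification error codes to three of the categories above. The `Category` enum and the mapping are illustrative assumptions, not a complete taxonomy: mis-issuance, key compromise and CA outages do not show up as client verification errors, and the revoked code only appears when the client actually checks revocation.

```python
import ssl
from enum import Enum
from typing import Optional


class Category(Enum):
    EXPIRY = "expiry"
    REVOCATION = "revocation-propagation"
    TRUST_MISMATCH = "trust-mismatch"
    UNKNOWN = "unknown"


# OpenSSL X509_V_ERR_* codes, as exposed in SSLCertVerificationError.verify_code.
_VERIFY_CODE_TO_CATEGORY = {
    10: Category.EXPIRY,          # certificate has expired
    23: Category.REVOCATION,      # certificate revoked
    2: Category.TRUST_MISMATCH,   # unable to get issuer certificate
    18: Category.TRUST_MISMATCH,  # self-signed leaf certificate
    19: Category.TRUST_MISMATCH,  # self-signed certificate in chain
    20: Category.TRUST_MISMATCH,  # unable to get local issuer certificate
    21: Category.TRUST_MISMATCH,  # unable to verify the first certificate
}


def categorise(verify_code: Optional[int]) -> Category:
    """Map an OpenSSL verify code to an incident category; unknown codes stay UNKNOWN."""
    return _VERIFY_CODE_TO_CATEGORY.get(verify_code, Category.UNKNOWN)


def categorise_error(exc: ssl.SSLCertVerificationError) -> Category:
    """Categorise a verification error raised during a TLS handshake."""
    return categorise(getattr(exc, "verify_code", None))
```

An `UNKNOWN` result is a prompt for a human to classify the incident, not evidence that the incident is unrelated to certificates.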

The expiry-incident runbook

This is the runbook the operations team uses most often. Six steps:

1. Confirm the cause. The service is down; logs show TLS handshake failure or "certificate expired" errors. Check the certificate currently being presented by the affected service. Confirm it has indeed expired and that this is the cause of the outage rather than a coincidental failure.

2. Identify ownership. Who owns the affected service? Who owns the expired certificate? Are they the same team? In many incidents, the service owner does not know the certificate exists. The incident commander has to bridge ownership during the response.

3. Determine replacement path. Is there an automated renewal path (combined workflow) that simply needs to be triggered? Is there an admin-mediated path that needs to be expedited? Is the certificate something that requires manual intervention because the original integration was bespoke? The answer determines the replacement timeline. This depends directly on the maturity of the underlying issuance workflows and renewal operations.

4. Issue the replacement. Through the appropriate channel, issue a replacement certificate. For combined workflow, this may take seconds; for admin-mediated workflow, minutes to hours; for bespoke integrations, longer. The incident commander tracks elapsed time against the service's customer-impact window.

5. Deploy and verify. Deploy the replacement to the affected service. Verify the service is responding correctly with the new certificate. The verification has to confirm the new certificate is in use and the service is healthy — not just that some certificate is presented.

6. Post-incident review. Why did this expiry happen? Three causes are dominant: (a) renewal automation failed silently and no-one was alerted; (b) renewal happened but deployment didn't, and verification didn't catch it; (c) the certificate had no automation at all and was being managed by tribal knowledge. The post-incident review identifies which cause applies and triggers operational improvements.
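Step 5 asks for proof that the new certificate is the one in use, not just that some certificate is presented. One way to sketch that check is to compare the SHA-256 fingerprint of the certificate the service presents with the fingerprint of the replacement that was issued. The function names here are hypothetical, and the fetch helper relies on `ssl.get_server_certificate`, which by default retrieves the certificate without validating it, so it also works against a service that is still presenting the expired certificate.

```python
import hashlib
import ssl


def sha256_fingerprint(der: bytes) -> str:
    """Hex SHA-256 digest of a DER-encoded certificate."""
    return hashlib.sha256(der).hexdigest()


def fetch_presented_der(host: str, port: int = 443) -> bytes:
    """Fetch the certificate the service presents (no validation) and return it as DER."""
    pem = ssl.get_server_certificate((host, port))
    return ssl.PEM_cert_to_DER_cert(pem)


def replacement_in_use(presented_der: bytes, expected_der: bytes) -> bool:
    """True only when the presented certificate is exactly the issued replacement."""
    return sha256_fingerprint(presented_der) == sha256_fingerprint(expected_der)
```

In use, `expected_der` would come from the issuance step and `presented_der` from `fetch_presented_der` against each endpoint or load-balancer node. Service health still needs its own check; a matching fingerprint says nothing about whether requests succeed.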

What a good post-incident review identifies

Post-incident reviews for certificate incidents are particularly productive because the cause space is narrow. A useful review identifies:

Why the expiry was reached. Either the renewal didn't happen (process or tooling failure) or it happened and the deployment failed (verification failure). Both have specific operational fixes.

Why detection was late. The certificate expired, and time elapsed before anyone noticed. Why? Was the monitoring inadequate, was the alerting tuned wrong, did the alert fire and get missed, was there no alert configured? Each has a different fix — and each is the responsibility of PKI logging and monitoring.

Why the response took the time it took. The replacement issuance took N minutes; the deployment took M minutes; the verification took L minutes. Where was the time spent? Often the time is dominated by ownership confusion (figuring out who can authorise the change) rather than the technical work itself.

What pattern this represents. Was this a one-off, or is it likely to recur for other certificates with similar profile? If similar — same CA, same workflow, same team, same automation gap — then the fix needs to apply across the pattern, not just to this certificate.

What changes the operating model. Sometimes an incident reveals that the operating model itself has a gap — a category of certificate that no-one was managing, a workflow that was never defined, a handoff between teams that doesn't work. These changes are larger than the immediate incident response and need to be tracked.
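The question of where the response time went can be answered from the incident timeline. The sketch below assumes the timeline is recorded as a list of (phase name, start time) markers ending with a "resolved" marker. The phase names and timestamps are hypothetical.

```python
from datetime import datetime


def phase_durations(events: list[tuple[str, datetime]]) -> dict[str, float]:
    """Minutes spent in each phase; each phase runs until the next marker begins."""
    ordered = sorted(events, key=lambda e: e[1])
    return {
        name: (nxt[1] - started).total_seconds() / 60.0
        for (name, started), nxt in zip(ordered, ordered[1:])
    }


def dominant_phase(events: list[tuple[str, datetime]]) -> str:
    """Name of the phase that consumed the most time."""
    durations = phase_durations(events)
    return max(durations, key=durations.get)


# Hypothetical timeline for one expiry incident.
timeline = [
    ("detection", datetime(2025, 6, 1, 12, 0)),
    ("ownership", datetime(2025, 6, 1, 12, 10)),
    ("issuance", datetime(2025, 6, 1, 12, 50)),
    ("deployment", datetime(2025, 6, 1, 12, 55)),
    ("verification", datetime(2025, 6, 1, 13, 5)),
    ("resolved", datetime(2025, 6, 1, 13, 10)),
]
print(phase_durations(timeline))
print(dominant_phase(timeline))
```

In this invented timeline the ownership phase dominates, which is the pattern the review is meant to expose.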

The revocation-propagation incident pattern

Less common than expiry but operationally tricky:

A certificate was revoked. Some clients have noticed (CRL or OCSP cache is current); some haven't (cache is stale). The certificate is rejected in some places and accepted in others. The team that owns the certificate doesn't know which clients are in which state. Customer reports come in inconsistently.

The runbook here:

1. Confirm the revocation status. Check the CA's revocation infrastructure to confirm the revocation is in fact recorded. Check OCSP and CRL endpoints to confirm propagation is happening as expected.

2. Map the verifier population. Which clients are checking revocation, and how often do they refresh their cached state? This determines how long propagation will take to complete. Browsers vary; non-browser clients vary more.

3. Decide on coordinated replacement. If the revocation was correct but the propagation lag is causing user-visible issues, consider coordinated replacement: deploy a new certificate immediately and let the affected clients pick up the new one rather than waiting for revocation cache refresh. This trades cache-coherence wait time for replacement deployment time.

4. Communicate to affected stakeholders. Internal teams and external partners (where relevant) need to understand what is happening, what they should expect to see, and what they should do (typically: clear caches, refresh sessions, retry transactions).
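Step 2 of this runbook amounts to a small calculation: for each verifier group, the worst-case time at which it stops accepting the revoked certificate is the revocation time plus the longest it may hold cached revocation state. The sketch below assumes that simple model. The `VerifierGroup` structure, group names and cache ages are hypothetical, and real clients may behave less predictably.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class VerifierGroup:
    name: str
    checks_revocation: bool
    max_cache_age: timedelta  # longest a cached CRL or OCSP response is reused


def worst_case_propagation(revoked_at: datetime,
                           groups: list[VerifierGroup]) -> dict[str, Optional[datetime]]:
    """Latest time each group should see the revocation; None if it never checks."""
    return {
        g.name: (revoked_at + g.max_cache_age) if g.checks_revocation else None
        for g in groups
    }


# Hypothetical verifier population.
groups = [
    VerifierGroup("partner-api-clients", True, timedelta(hours=4)),
    VerifierGroup("internal-batch-jobs", True, timedelta(days=7)),
    VerifierGroup("legacy-appliances", False, timedelta(0)),
]
for name, when in worst_case_propagation(datetime(2025, 6, 1, 9, 0), groups).items():
    print(name, when)
```

A `None` entry marks a group that will keep accepting the revoked certificate until it expires, which is usually the strongest argument for the coordinated replacement in step 3.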

Known similar incidents in this category

These are recurring patterns. Your incident may match one — in which case the resolution path is well-understood.

1. Renewal automation fired but downstream automation failed silently

2. Certificate had no automation — managed by tribal knowledge

3. Renewal happened but verification confirmed the wrong thing

Where incident management breaks

No category recognition. Incidents are treated as generic service incidents. The certificate-specific cause is identified late. The remediation pattern is generic and inefficient. The fix is to train the incident response team to recognise certificate incident categories early and route to the specialist runbook.

Runbooks that don't survive contact with reality. The runbook references tools the team doesn't currently use, contacts who have left, or processes that have changed. The first time the runbook is exercised in anger, the incident response is slowed by runbook navigation. The fix is annual tabletop exercises that catch runbook drift.

Ownership confusion as the dominant time consumer. The incident response is delayed by figuring out who owns what. Service team and certificate operations team negotiate during the incident, while the customer impact accumulates. The fix is pre-incident — assign ownership clearly and document it where the incident response team will find it (in the CMDB, in the service catalogue, in the runbook).

Post-incident reviews without operational follow-through. The review identified the operational improvements; nothing was funded or scheduled to implement them. The next similar incident happens. The fix is treating PIR action items as engineering work with the same tracking and prioritisation as feature work.

Incidents not feeding back into the operating model. Incidents reveal gaps in the operating model — process gaps, ownership gaps, workflow gaps. If incidents are treated as one-offs, the operating model never improves. The fix is systematic: aggregate incident causes quarterly, identify the dominant operational gaps, and make operational improvements against the dominant ones.
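The quarterly aggregation described in the last item can start as a simple tally. The sketch below assumes each incident record is reduced to a date and a cause label taken from the post-incident review. The cause labels and records are invented for illustration.

```python
from collections import Counter, defaultdict
from datetime import date


def quarter_of(d: date) -> str:
    """Label a date with its calendar quarter, for example '2025-Q1'."""
    return f"{d.year}-Q{(d.month - 1) // 3 + 1}"


def dominant_causes(incidents: list[tuple[date, str]],
                    top_n: int = 2) -> dict[str, list[tuple[str, int]]]:
    """Most frequent causes per quarter, as (cause, count) pairs."""
    by_quarter: dict[str, Counter] = defaultdict(Counter)
    for occurred_on, cause in incidents:
        by_quarter[quarter_of(occurred_on)][cause] += 1
    return {q: counts.most_common(top_n) for q, counts in by_quarter.items()}


# Hypothetical incident log.
incidents = [
    (date(2025, 1, 14), "silent renewal failure"),
    (date(2025, 2, 3), "no automation"),
    (date(2025, 3, 21), "silent renewal failure"),
    (date(2025, 4, 9), "deployment not verified"),
]
print(dominant_causes(incidents))
```

The counts are only as good as the cause labels, so the review needs to record causes using a consistent vocabulary.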

Maturity progression for certificate incident management

The five-level PKI operational maturity model introduced in the pillar maps onto the incident management domain as follows.

Level 1 — Ad-hoc. Certificate incidents are handled as generic service incidents. The certificate-specific cause is often identified late, sometimes only during the post-incident review. There are no certificate-specific runbooks. Each incident is improvised and the team's collective knowledge is whatever individuals happen to remember from previous incidents.

Level 2 — Tooled. A few generic certificate runbooks exist — typically a "certificate expired, here's what to do" document of varying quality. The runbooks are referenced during incidents but they don't reliably help because they were written for a previous version of the estate. Post-incident reviews happen but their action items often don't get implemented.

Level 3 — Operationalised. The six incident categories are recognised. Category-specific runbooks exist for the dominant subcategories (expiry, revocation propagation, trust mismatch). Incident commanders identify the category early and route to the specialist runbook. Post-incident reviews are systematic and the review questions are documented.

Level 4 — Integrated. Two structural elements operate together: category-specific runbooks for every subcategory in the taxonomy, and a quarterly tabletop exercise schedule that rotates through the categories so each runbook is exercised at least annually. Tabletop exercises are treated as a delivery — they have outcomes, they update the runbooks, they reveal operational gaps that get tracked.

Level 5 — Intelligent. Incident patterns are analysed across quarters and surfaced to the operating model. Recurring patterns identify systemic gaps that get fixed at the operating model level. Detection precedes user impact for most categories — the certificate-issuance pipeline produces signals before the service breaks. The expiry-incident category, the most preventable, becomes rare.

Most enterprises sit at level 1 or 2 because they treat certificate incidents as a subset of service incidents rather than as their own discipline. Progression to level 3 is achievable within a quarter once the runbooks are written. Progression to level 4 requires the tabletop discipline that organisations tend to defer because the exercises feel like overhead. They stop feeling like overhead the first time an unexercised runbook fails in production.

Further reading within this cluster

- Enterprise PKI operating model — the pillar page

- Certificate governance and the steering function

- Certificate discovery in practice

- Certificate issuance workflows

- Certificate renewal operations

- Certificate revocation operations

- Platform onboarding for certificate automation

- Trust-store management

- Operational vs security logging for PKI

- Change management for PKI operations