Platform Onboarding for Certificate Automation

Every enterprise estate is multi-platform: public cloud (typically two or three providers), on-premise infrastructure, container platforms, identity systems, and application-specific certificate stores. Each is a distinct integration surface for the central certificate lifecycle management (CLM) function. Onboarding new platforms is a recurring operational activity, not a one-time project.

Part of: Enterprise PKI Operating Model — the pillar page for the operations library.

The right approach treats platform onboarding as a repeatable pattern — defined steps, known failure modes, standard tooling — so that the team that integrated AWS three years ago can integrate the next platform in weeks rather than months. The wrong approach treats each platform as a unique engagement, rebuilds the integration each time, and produces an estate where the operations team understands AWS in detail and Azure barely at all.

Why platform onboarding is its own operational domain

It is tempting to fold platform onboarding into general issuance or general operations. It deserves separate treatment for three reasons:

Each platform has a distinct integration architecture. The list is long: AWS Certificate Manager, AWS Private CA, Azure Key Vault, GCP Certificate Manager, Active Directory Certificate Services, Kubernetes (cert-manager and beyond), HashiCorp Vault, and application-specific stores (Java keystores, IIS, Apache, nginx, F5, Akamai). The integration patterns are not interchangeable.

Onboarding involves cross-team coordination. The platform team owns the platform, the certificate operations team owns the central CLM, the security team owns the policy. Onboarding requires alignment across all three. Without explicit coordination, the integration gets built but never actually used because the platform team didn't know it existed.

The work is recurring. New platforms are added regularly: a new cloud provider or region, a new container orchestration layer, a new application platform. A mature operating model standardises the onboarding process so that this recurring work is predictable.

The onboarding pattern

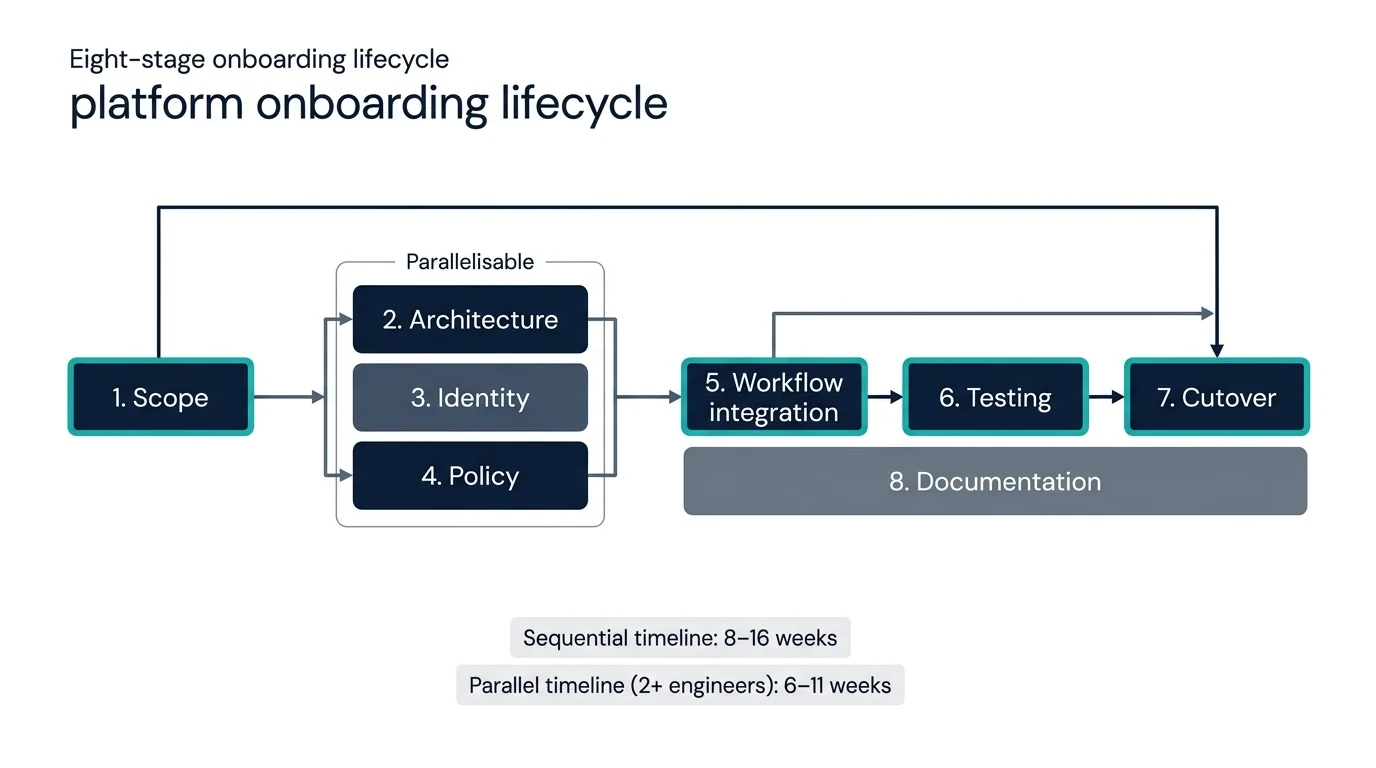

The platform onboarding lifecycle comprises eight stages. Some are technical, some are organisational, and all are required.

1. Scope definition. What does this platform need from the certificate operations function? Public TLS certificates only? Internal mTLS as well? Code signing? Document signing? The scope determines which integration surfaces matter and which can be deferred. Most platform onboardings start narrow (TLS only) and expand over time.

2. Integration architecture. How will certificates flow from the central CLM to this platform? Three patterns recur:

- Pull pattern. The platform queries the CLM via API to retrieve certificates. Common in cloud-native environments where the platform has a service identity that authenticates to the CLM.

- Push pattern. The CLM delivers certificates to the platform via an API the platform exposes. Common for traditional platforms with their own certificate store APIs.

- Native-CA pattern. The platform's native certificate authority is integrated into the central operating model: the platform issues certificates locally, but the central CLM has visibility and policy control. AWS Certificate Manager, GCP Certificate Manager, and cert-manager on Kubernetes all fit this pattern.

The architecture choice depends on the platform's capabilities and the scope defined in step 1.
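The choice among the three patterns can be sketched as a small decision function. This is a minimal illustration, not a prescribed algorithm: the `PlatformCapabilities` fields are hypothetical, and the precedence order (native CA first, then pull, then push) is an assumption made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Pattern(Enum):
    PULL = "pull"
    PUSH = "push"
    NATIVE_CA = "native-ca"


@dataclass(frozen=True)
class PlatformCapabilities:
    # Hypothetical capability flags gathered during scope definition.
    has_native_ca: bool         # platform can issue certificates itself
    has_service_identity: bool  # platform can authenticate to the CLM API
    exposes_store_api: bool     # platform offers an API to receive certificates


def choose_pattern(caps: PlatformCapabilities) -> Pattern:
    """Pick an integration pattern; the precedence order is an assumption."""
    if caps.has_native_ca:
        return Pattern.NATIVE_CA
    if caps.has_service_identity:
        return Pattern.PULL
    if caps.exposes_store_api:
        return Pattern.PUSH
    raise ValueError("no supported integration pattern for this platform")
```

In practice the scope from step 1 would also feed into the decision; the sketch only shows that the choice can be made explicit and reviewable rather than left implicit in the integration code.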

3. Identity and authentication. How does the integration authenticate? The usual options are service-account credentials, mutual TLS, cloud-native identity (IRSA on AWS, Workload Identity on GCP), and federated identity. The authentication mechanism has to be at least as strong as the certificates being managed; otherwise the integration is the weakest link.

4. Policy mapping. The platform supports a subset of the certificate policies the organisation has defined centrally. Map which central policies apply to this platform, which need adaptation, and which cannot be enforced through the platform's controls. Where policies cannot be enforced through the platform, document the gap and either accept it (low risk), compensate (additional monitoring), or restrict the platform's certificate types (don't allow it to issue the high-risk types). This mapping is the link to certificate governance.
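One way to keep the policy mapping auditable is to record it as data. The sketch below is illustrative only: the class names, field names, and policy names are hypothetical, and the only rule it encodes is the one stated above, that every gap needs an explicit accept, compensate, or restrict decision.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Enforcement(Enum):
    APPLIES = "applies"  # enforced as-is through the platform
    ADAPTED = "adapted"  # enforced after platform-specific adaptation
    GAP = "gap"          # cannot be enforced through the platform


class GapTreatment(Enum):
    ACCEPT = "accept"          # low risk, accepted
    COMPENSATE = "compensate"  # covered by additional monitoring
    RESTRICT = "restrict"      # platform may not issue the affected types


@dataclass(frozen=True)
class PolicyMapping:
    policy: str
    enforcement: Enforcement
    treatment: Optional[GapTreatment] = None


def untreated_gaps(mappings: List[PolicyMapping]) -> List[str]:
    """Return the names of gap policies that have no recorded treatment."""
    return [m.policy for m in mappings
            if m.enforcement is Enforcement.GAP and m.treatment is None]


# Hypothetical policy names, for illustration only.
mappings = [
    PolicyMapping("max-validity-398-days", Enforcement.APPLIES),
    PolicyMapping("approved-key-algorithms", Enforcement.ADAPTED),
    PolicyMapping("san-allow-list", Enforcement.GAP, GapTreatment.COMPENSATE),
    PolicyMapping("code-signing-approval", Enforcement.GAP),
]
print(untreated_gaps(mappings))
```

A non-empty result is a signal that the mapping is not yet ready to hand to governance.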

5. Workflow integration. Connect the platform's certificate-management workflows to the central operating model. Issuance requests from this platform route through the central issuance workflow. Renewal events on this platform trigger central renewal automation. Discovery scans cover this platform's certificate stores. Logging from this platform feeds the central monitoring.

6. Testing. Test issuance, renewal, revocation, discovery, and incident response end to end on this platform. Run the tests in a non-production instance of the platform under a representative service load. Testing is the step most often cut short, and gaps in testing are where most onboarding problems originate.

7. Production cutover. Move from test to production. Initial production use is typically a single low-risk service, with monitoring and rollback ready. Expansion to broader production use happens over weeks as confidence builds.

8. Documentation and handover. The integration is documented to the standard required by the operations team's runbooks. The platform team has the operational documentation they need to use the integration. The certificate operations team has the integration documentation they need to support it.

The eight stages are sequential in outline (you can't test before you've built), but work within a stage can run in parallel, and stages 2, 3, and 4 can run in parallel with each other when the team has dedicated capacity. A typical onboarding for a new cloud provider takes 8–16 weeks of elapsed time when run sequentially; with stages 2–4 running in parallel and stage 8 absorbed alongside 5–7, the same scope compresses to 6–11 weeks. A new tenant within an already-onboarded platform takes days. The compression is real but bounded: testing and cutover dominate the critical path and do not compress with additional engineers.
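The compression arithmetic can be made concrete with a small calculation. The per-stage durations below are hypothetical, chosen only so the totals fall inside the ranges quoted above; they are not measurements.

```python
# Hypothetical stage durations in weeks (stage number -> duration).
DURATIONS = {1: 1, 2: 2, 3: 1, 4: 1, 5: 2, 6: 3, 7: 2, 8: 1}


def sequential_weeks(durations):
    """Every stage starts only after the previous one finishes."""
    return sum(durations.values())


def parallel_weeks(durations):
    """Stages 2-4 overlap; stage 8 is absorbed alongside stages 5-7."""
    overlap = max(durations[2], durations[3], durations[4])
    return durations[1] + overlap + durations[5] + durations[6] + durations[7]


print(sequential_weeks(DURATIONS), parallel_weeks(DURATIONS))
```

With these illustrative figures the sequential plan is 13 weeks and the overlapped plan is 10. Testing and cutover (stages 6 and 7) account for 5 of those 10 weeks and are untouched by the overlap, which is why the compression is bounded.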

Testing is the dominant cost driver in a typical onboarding, and that is the right place for the cost to sit: testing depth determines whether the integration survives production. Resist the temptation to compress testing; compress earlier stages instead.

Platform-specific patterns

Each major platform has well-understood integration patterns. The summary below is not exhaustive — each platform deserves its own deep treatment — but it captures the dominant approach.

AWS. The native-CA pattern via AWS Certificate Manager (ACM) and AWS Private CA, integrated into the central CLM via APIs. Public certificates issued through ACM. Internal certificates issued through Private CA. Both are visible centrally through the AWS API; the central CLM treats AWS as one of its CAs. The integration surface is well-documented and stable. Known gotchas: AWS Private CA pagination tops out at 100 results per page and counts subordinate CAs separately; large estates require multi-page iteration with continuation tokens.
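Multi-page iteration with continuation tokens can be sketched generically. In the example below, `fetch_page` is a hypothetical callable that wraps whichever list operation the integration uses; the only assumption about the response is that it carries a `NextToken` field while more pages remain, which is the convention AWS list APIs generally follow.

```python
from typing import Callable, Iterator, Optional


def iterate_pages(fetch_page: Callable[[Optional[str]], dict],
                  items_key: str) -> Iterator[dict]:
    """Yield every item across pages, following NextToken until it is absent."""
    token: Optional[str] = None
    while True:
        page = fetch_page(token)
        yield from page.get(items_key, [])
        token = page.get("NextToken")
        if not token:
            break


# Stand-in for a real API call: three pages keyed by continuation token.
_PAGES = {
    None: {"Items": [{"id": 1}, {"id": 2}], "NextToken": "t1"},
    "t1": {"Items": [{"id": 3}], "NextToken": "t2"},
    "t2": {"Items": [{"id": 4}]},
}

all_items = list(iterate_pages(lambda token: _PAGES[token], "Items"))
```

A discovery integration that stops after the first page will silently under-report the estate, so it is worth testing the loop against an account large enough to paginate. SDKs such as boto3 also provide built-in paginators for many operations, which may remove the need for a hand-written loop.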

Azure. Native integration through Azure Key Vault for certificate storage, with Azure-managed CA for internal issuance. The pattern is similar to AWS but the API surfaces are different and Azure's identity model (managed identity, service principals) requires explicit handling. Cross-tenant scenarios add complexity. Known gotcha: Key Vault soft-delete is enabled by default, so certificates removed via the API remain recoverable for the retention period (90 days by default) unless they are purged. This can interfere with re-issuance scenarios where the integration expects a clean removal, because the certificate name stays reserved until the deleted object is purged or the retention period ends.

GCP. Certificate Manager and Certificate Authority Service provide the native primitives. Workload Identity is the typical authentication path for in-cluster services. The integration surface is younger than AWS or Azure and has evolved more in recent releases — verify the current capabilities for the version you are integrating against. Known gotcha: Certificate Manager and Certificate Authority Service have separate per-project quotas; estate planning needs to consider both, and exceeding quota produces opaque errors.

Active Directory Certificate Services. Microsoft's enterprise PKI for internal Windows-centric estates. The integration is typically through the certificate enrolment web services (the Certificate Enrollment Policy Web Service and Certificate Enrollment Web Service, HTTPS endpoints hosted on IIS and available since Windows Server 2008 R2), with Group Policy controlling client-side autoenrolment. ADCS is operationally robust but requires careful template management; the central CLM integrates by managing templates and consuming the issuance audit logs. Known gotcha: enrolment web service requests can be throttled by the underlying IIS configuration; the defaults are conservative for high-volume issuance and explicit IIS tuning is often required.

Kubernetes. cert-manager is the de facto standard, with built-in support for ACME issuers (public CAs) and Vault, and external issuer plugins for AWS Private CA, GCP Certificate Authority Service, and others. The integration with the central CLM is typically through the upstream issuer rather than directly with cert-manager: cert-manager handles in-cluster automation, and the upstream issuer is what the central CLM controls. Known gotcha: the cert-manager reconciliation loop has known scaling issues above approximately 1,000 certificates per cluster; large clusters benefit from sharding into multiple cert-manager instances.

HashiCorp Vault. Vault's PKI secrets engine acts as both an issuance interface (cert-manager and others issue through it) and a CA (it can be the issuing CA for internal certificates). Integration with the central CLM is bi-directional: the CLM may treat Vault as one of its CAs, and Vault may treat the CLM as one of its issuance sources. Known gotcha: the PKI secrets engine mount has a default maximum TTL that caps per-role TTL configurations, so a role configured for a longer lifetime does not get it until the mount's maximum is raised. This is easy to miss until issuance fails for non-obvious reasons.
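The TTL interaction is easier to reason about as a formula. The function below is a deliberately simplified model, not Vault's implementation: it assumes the shortest of the requested TTL, the role's maximum, and the mount's maximum wins. Whether Vault caps or rejects a request in a given situation should be checked against the version in use, and the 32-day mount limit in the example is an illustrative value.

```python
from datetime import timedelta


def effective_ttl(requested: timedelta,
                  role_max_ttl: timedelta,
                  mount_max_ttl: timedelta) -> timedelta:
    """Simplified model: the shortest of the three limits wins."""
    return min(requested, role_max_ttl, mount_max_ttl)


# A role that allows one-year certificates on a mount capped at 32 days.
ttl = effective_ttl(
    requested=timedelta(days=365),
    role_max_ttl=timedelta(days=365),
    mount_max_ttl=timedelta(days=32),
)
print(ttl.days)
```

The practical consequence is that onboarding tests should assert on the validity period of the issued certificate, not only on the role configuration.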

Application platforms (Java, .NET, Apache, nginx, IIS, F5, Akamai). Platform-specific certificate stores with platform-specific integration patterns. Java keystores require keytool or programmatic JKS management. IIS uses the Windows certificate store. F5 has its own certificate management; F5 certificate-key associations must be explicitly maintained — orphaned keys are common. Akamai integrates via its API; tokens must be rotated quarterly and trust chains must be uploaded explicitly.

Where the platform has a native CA, the native-CA pattern is the right choice: the platform issues certificates locally and the central CLM exercises policy oversight without becoming the issuance bottleneck.

For authentication, cloud-native identity (IRSA, Workload Identity, Managed Identity) is the strongest option because no shared credentials are involved.

Known gotchas — AWS Private CA

These are recurring issues teams encounter with this platform. They are not prominent in vendor documentation but consistently surface during onboarding.

1. CreateCertificateAuthority rate limit

CreateCertificateAuthority is rate-limited to one call per second during initial provisioning. Bulk CA setup scripts must respect this limit or they fail with opaque ThrottlingException errors.

2. Pagination counts subordinates separately

ListCertificates pagination is 100 per page but counts subordinate CAs separately from issued certificates. Discovery integrations need to iterate both spaces explicitly.

3. CA hierarchy ordering

CA hierarchy operations (root CA + subordinates) require explicit ordering — root must be active before subordinates can issue. Automation that creates the hierarchy in parallel will fail intermittently.
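The ordering and rate-limit constraints above can be combined in one sequential provisioning routine. This is a sketch under stated assumptions: `create_ca` and `is_active` are hypothetical callables that wrap the real API calls (including whatever steps make the root active), and the one-second spacing and polling values are illustrative defaults.

```python
import time
from typing import Callable, List


def build_hierarchy(create_ca: Callable[[str], str],
                    is_active: Callable[[str], bool],
                    root_name: str,
                    subordinate_names: List[str],
                    min_interval: float = 1.0,
                    poll_interval: float = 5.0,
                    timeout: float = 600.0,
                    sleep: Callable[[float], None] = time.sleep) -> List[str]:
    """Create the root, wait until it is active, then create subordinates in order."""
    root_id = create_ca(root_name)
    waited = 0.0
    while not is_active(root_id):
        if waited >= timeout:
            raise TimeoutError(f"root CA {root_name!r} did not become active")
        sleep(poll_interval)
        waited += poll_interval
    created = [root_id]
    for name in subordinate_names:
        sleep(min_interval)  # space out create calls to stay under the rate limit
        created.append(create_ca(name))
    return created
```

Passing `sleep` as a parameter keeps the routine testable without real delays. The design choice is to trade provisioning speed for determinism: nothing runs in parallel, so the intermittent failures described above cannot occur by construction.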

What determines the right onboarding approach

Three parameters shape the onboarding strategy:

Platform maturity. Mature platforms (AWS, Azure, ADCS) have stable APIs, well-documented patterns, and existing integration libraries. Newer platforms (GCP Certificate Manager, recent versions of cert-manager) have evolving APIs and may require rework as they stabilise. The onboarding plan accounts for the platform's lifecycle position.

Scope creep tolerance. Will the initial onboarding hold its scope, or will the team be asked to expand mid-flight? Cloud providers typically expand: an initial onboarding for production TLS quickly grows to include staging, dev, and the long tail of edge cases. The right approach defines explicit phases with separate scopes for each, rather than fighting scope expansion.

Risk tolerance and rollback strategy. What happens if the integration fails in production? Cloud-native services with good rollback (revert to manual configuration, route around the integration) tolerate aggressive cutover. Legacy services with no rollback path require staged migration with parallel operation periods. The risk profile determines the cutover plan.

Where platform onboarding breaks

No standardised pattern. Each platform integration is built bespoke. The team that did the AWS integration has moved on; the new team is rebuilding from scratch for Azure. The cumulative cost is enormous and the operational consistency suffers. The fix is to extract the common pattern after the second integration and apply it to every subsequent one.

Integration without ongoing ownership. The platform was onboarded; the integration was deployed; the team responsible for it disbanded. The integration is running but unmaintained. When the platform's API changes, the integration silently breaks. The fix is to assign explicit ongoing ownership for every integration before declaring onboarding complete.

Policy gaps not surfaced. The platform doesn't support the central policy. The team integrated anyway, with a quiet understanding that the gap exists. Compliance discovers the gap during the next audit. The fix is to document policy gaps explicitly during step 4 — surface them to governance and either accept, compensate, or restrict.

Production cutover without testing depth. The integration was tested with a few certificates and declared ready. Production exposed edge cases — high-volume issuance, rate limits, error-handling paths — that hadn't been exercised. The fix is to test at production scale before cutover, not to discover scale issues in production.

Documentation that doesn't survive the original team. The original integrator wrote the docs at a level that made sense to them. The next person to support the integration cannot follow it. The fix is to write documentation against a standard runbook template that someone unfamiliar with the integration can follow.

Maturity progression for platform onboarding

The five-level PKI operational maturity model introduced in the pillar maps onto the platform onboarding domain as follows. Onboarding is one of the few domains where maturity is genuinely measurable as cycle time per integration.

Level 1 — Ad-hoc. First platform integration was bespoke. Each subsequent integration starts from scratch — different engineer, different approach, different documentation style. The team has done two or three integrations and each took three to six months. No common framework exists.

Level 2 — Tooled. Multiple platform integrations exist but they don't share patterns. Some teams use cert-manager, others use a vendor CLM, others have built bespoke automation. The integrations work individually but are operationally inconsistent — each requires different runbook knowledge to support. Cycle time for new integrations: 12–24 weeks because every integration rediscovers the same problems.

Level 3 — Operationalised. A documented onboarding pattern exists, derived from the first three platform integrations. New integrations follow the eight-stage lifecycle. The pattern is recognisable across integrations even if the implementation details differ. Cycle time for new integrations: 8–12 weeks for a new platform, 4–6 weeks for a variant of an already-onboarded platform.

Level 4 — Integrated. The onboarding pattern has been applied to the tenth platform with cycle time under four weeks. The pattern itself is now refined by repetition — the team has captured platform-specific gotchas, common policy gaps, and standard testing scripts. New platform integration is a planned, predictable activity that fits in a standard quarterly programme cycle. Cross-team coordination is templated rather than negotiated each time.

Level 5 — Intelligent. Platform onboarding is largely self-service for the platform team. The certificate operations team provides the framework, the platform team executes against it with operations as a coach rather than a builder. New platforms come through the framework rapidly because the framework anticipates the common patterns and surfaces only the platform-specific decisions that genuinely require central judgement.

Most enterprises sit at level 1 or 2 because they have not yet completed enough integrations to have a pattern worth standardising. The progression to level 3 happens after the third integration if the team takes the time to extract the pattern. The progression to level 4 takes ten integrations of disciplined refinement; many organisations stall at level 3 because each new integration is treated as urgent rather than as another opportunity to refine the pattern.
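Because maturity in this domain is expressed as cycle time per integration, it can be tracked with very little tooling. The helpers below are a minimal sketch; the function names and the sample dates are hypothetical, and the definition of "improving" (each integration strictly faster than the last) is an assumption for illustration.

```python
from datetime import date


def cycle_time_weeks(started: date, completed: date) -> float:
    """Elapsed weeks between kickoff and handover for one integration."""
    return (completed - started).days / 7


def is_improving(cycle_times):
    """True when each integration was strictly faster than the one before it."""
    return all(later < earlier
               for earlier, later in zip(cycle_times, cycle_times[1:]))


# Illustrative dates only.
first = cycle_time_weeks(date(2024, 1, 1), date(2024, 3, 25))
print(first, is_improving([20.0, first, 8.0]))
```

A flat or rising trend across successive integrations suggests the pattern is not being refined between engagements, which is the stall at level 3 described above.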