Trust-Store Management

A trust store is the list of certificate authorities a service trusts to issue certificates. When the service receives a certificate, it checks whether the certificate chains to a CA in its trust store. If it does, and the other validation checks pass, the certificate is accepted. If it does not, the connection fails. Trust stores are foundational to TLS and to the broader PKI, yet they are rarely managed with the rigour they require.

Part of: Enterprise PKI Operating Model — the pillar page for the operations library.

Most enterprise certificate operations focus on the certificates themselves: issuance, renewal, revocation. The trust stores those certificates depend on are treated as configuration, not operational state. This works until a root CA is rotated, distrusted, or compromised — at which point every service in the estate that hasn't updated its trust store breaks simultaneously.

What a trust store contains and why it changes

A trust store contains:

- Root CA certificates. The top of each certification path. A service that trusts a root CA trusts every certificate that chains up to that root.

- Intermediate CA certificates. The CAs to which the roots have delegated issuance. Most services don't strictly need intermediates in their trust store (the peer can send them during the TLS handshake), but practical configurations often include them.

- Trust anchors specific to the deployment. Internal CAs that the organisation operates, partner CAs trusted for specific federations, special-purpose CAs for narrow use cases (code signing, document signing, S/MIME).

Trust stores change for reasons including:

Root rotation. A root CA is reaching end-of-life. The CA operator issues a new root, signs intermediates with the new root, and distributes the new root for inclusion in trust stores. Existing services need to add the new root before the old one stops being usable.

CA distrust events. A root CA is removed from major trust programs because of compromise, misissuance, or operator failure. DigiNotar (2011), Symantec (2017–2018), and Entrust (2024–2025) are prominent examples. Affected services need to remove the distrusted CA and replace the certificates it issued.

New CA additions. The organisation begins using a new CA — a new public provider, a new internal CA stood up for a project, a partner CA for a new federation. The trust stores of services that consume certificates from this CA need to be updated.

Internal CA rotation. An internal CA (typically used for mTLS or service identity) is rotated as part of normal lifecycle management. The new CA replaces the old; trust stores need both during the transition window.

Cryptographic algorithm changes. A CA hierarchy is being updated to support new algorithms (post-quantum, ECDSA migration, hash algorithm changes). New CAs with the new algorithms are introduced; old ones may be retired.

Each of these is a change event that affects every service whose trust store includes the affected CA. The blast radius can be very large.
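
For the internal CA rotation case, the period during which trust stores need both CAs can be estimated from data the CA team already holds. The following is a minimal sketch, assuming the team can export the expiry dates of leaf certificates signed by the old CA; the function name `dual_trust_window` and the sample dates are hypothetical.

```python
from datetime import date


def dual_trust_window(old_ca_leaf_expiries, first_new_ca_issuance):
    """Return (start, end) of the period in which both CAs must be trusted.

    start: the new CA must be in trust stores before it issues anything.
    end:   the old CA must stay until the last certificate it signed expires.
    """
    start = first_new_ca_issuance
    end = max([start, *old_ca_leaf_expiries])
    return start, end


# Hypothetical expiry dates exported from the old CA's issuance records.
expiries = [date(2025, 3, 10), date(2025, 4, 22), date(2025, 2, 1)]
start, end = dual_trust_window(expiries, first_new_ca_issuance=date(2025, 1, 15))
print(f"trust both CAs from {start} until {end}")
```

The sketch ignores revocation and early replacement, either of which would shorten the window in practice.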

The operational scope

A complete trust-store operating model has four components.

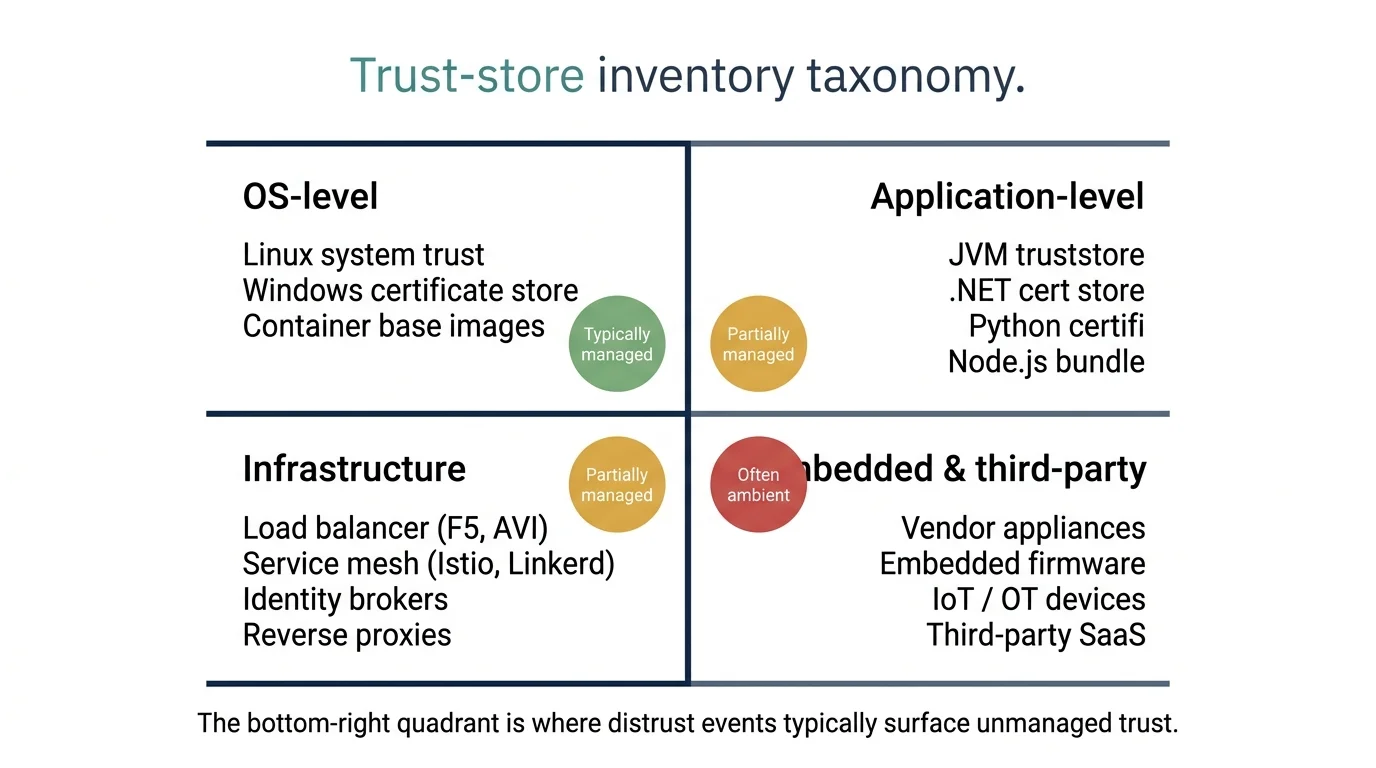

Inventory. What trust stores exist across the estate, what each contains, where they are stored, and which services depend on each. Most enterprises have not constructed this inventory and discover the answer is large: every operating system instance has at least one OS-level trust store, every Java application has a JVM trust store, every container image has an OS-level trust store, every browser has its own trust store, every load balancer has its trust store, every service mesh has its trust configuration, every identity broker has its federation trust list, and every embedded device or third-party appliance has whatever trust list the vendor shipped. The inventory is the foundation for everything else — and is where certificate discovery intersects this domain.

Authoritative source. What is the source of truth for what should be in each trust store? The operating model defines an authoritative trust list for each category of service — typically segmented by trust scope (public-internet-facing, internal-services, federated-partners, code-signing) — and a process for keeping each list current.

Distribution mechanism. How does the authoritative trust list get into the actual trust stores deployed across the estate? The options include configuration management (Ansible, Puppet, Chef, SaltStack), container image baking, native OS update mechanisms, application-level configuration, and manual updates for the long tail. The distribution mechanism varies by service type; the operating model documents which mechanism applies to which estate segment.

Change coordination. When the trust list changes, how are the changes coordinated across the estate? This is the operational core. Changes that add a CA can usually deploy gradually with low risk. Changes that remove a CA can break services and require coordination with the teams that depend on the removed CA.
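
The inventory component can be pictured as a set of records, one per trust store, with a reverse lookup from a CA to the services that trust it. The following is a minimal sketch rather than a schema recommendation; the record fields, identifiers, and shortened fingerprints are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrustStoreRecord:
    """One inventory row: a trust store and what depends on it."""
    store_id: str               # hypothetical identifier
    kind: str                   # "os", "jvm", "container-image", "appliance", ...
    location: str               # path, image tag, or "vendor-managed"
    ca_fingerprints: frozenset  # fingerprints of the CAs the store trusts
    services: frozenset         # services that rely on this store


def services_trusting(inventory, ca_fingerprint):
    """Return the sorted names of services whose trust store contains the CA."""
    hits = set()
    for record in inventory:
        if ca_fingerprint in record.ca_fingerprints:
            hits.update(record.services)
    return sorted(hits)


# Illustrative data only; real fingerprints would be full SHA-256 digests.
inventory = [
    TrustStoreRecord("os:web-01", "os", "/etc/ssl/certs",
                     frozenset({"aa11", "bb22"}), frozenset({"storefront"})),
    TrustStoreRecord("jvm:billing", "jvm", "cacerts",
                     frozenset({"bb22", "cc33"}), frozenset({"billing-api"})),
    TrustStoreRecord("appliance:lb-7", "appliance", "vendor-managed",
                     frozenset({"aa11"}), frozenset({"storefront", "partner-gw"})),
]

print(services_trusting(inventory, "aa11"))  # ['partner-gw', 'storefront']
```

Keeping the service dependency on the record is what lets the question "which services trust this CA?" be answered from the inventory rather than from an emergency discovery exercise.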

The change-coordination problem

Trust-store updates are change events with three distinguishing characteristics:

Wide blast radius, low per-service impact. A trust-store change touches every service that uses that trust store. For an OS-level trust store, that is potentially every service running on that OS. The per-service operational impact is small (a configuration update, sometimes a service restart) but the coordination requirement scales with the breadth.

Asymmetric risk on additions versus removals. Adding a CA to a trust store is low-risk: the worst case is that a CA is trusted that wasn't needed. Removing a CA from a trust store is high-risk: services that were using certificates from that CA now fail. The change-management processes for additions and removals are different.

Long propagation tails. A trust-store change deployed today is fully effective only when every affected service has been updated. Some services are updated immediately (config-managed cloud-native services), some on the next deployment cycle (container images), some on the next maintenance window (traditional servers), some never (services that are running but no longer being maintained). The change is “complete” only when the tail is exhausted.

The operational pattern that handles all three: deploy additions broadly with low ceremony; deploy removals with explicit phasing — discovery, communication, transition window, removal, verification. This connects directly to change management for PKI operations.
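
The asymmetry between additions and removals can be encoded at the point where a trust-list change is proposed. The following is a minimal sketch, assuming trust lists are represented as sets of CA identifiers; the process labels `standard-rollout` and `phased-removal` are hypothetical names for the two change paths described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrustListChange:
    additions: frozenset
    removals: frozenset

    @property
    def process(self):
        """Any removal forces the phased path; additions alone roll out broadly."""
        if self.removals:
            return "phased-removal"
        if self.additions:
            return "standard-rollout"
        return "no-change"


def plan_change(current, proposed):
    """Diff two trust lists and classify the resulting change."""
    current, proposed = frozenset(current), frozenset(proposed)
    return TrustListChange(additions=proposed - current,
                           removals=current - proposed)


# Illustrative CA identifiers only.
current = {"root-a", "root-b", "internal-ca-1"}
proposed = {"root-a", "internal-ca-1", "internal-ca-2"}
change = plan_change(current, proposed)
print(change.process, sorted(change.additions), sorted(change.removals))
# phased-removal ['internal-ca-2'] ['root-b']
```

A mixed change is classified by its riskiest part, so the addition in the example can still be shipped early as its own change while the removal goes through phasing.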

The transition window pattern

Removing a CA from production trust stores follows a phased pattern designed to minimise the risk of service disruption:

1. Inventory dependencies. Identify every service that uses certificates issued by the CA being removed. Each of these services needs to migrate to a different CA before the trust-store removal takes effect. The inventory comes from issuance records (which the CLM has) and discovery (which the operations team runs).

2. Communicate the timeline. Notify all teams owning affected services. The notification includes the deadline for migration, the alternative CAs available, and the support process for the migration. Send multiple notifications spaced over the transition window; a typical pattern is an announcement at 90 days, a reminder at 60 days, an escalation at 30 days, and a final warning at 14 days.

3. Migrate the certificate inventory. Affected services migrate their certificates from the old CA to a new one. This is normal certificate-replacement work, scaled to the size of the affected inventory and following the standard issuance workflow and renewal processes.

4. Verify migration completion. Actively verify that no certificates from the old CA remain in production use. The CLM's issuance records, combined with discovery scanning, provide the evidence.

5. Remove the CA from trust stores. Once verification confirms no production dependencies, the CA is removed from the trust list and the change is propagated through the distribution mechanism.

6. Monitor for residual failures. The first weeks after removal are monitored for failures from services that were missed in the inventory. The runbook for the residual failure is to add the CA back temporarily, allow the affected service to migrate, and re-attempt the removal.
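
The verification gate in step 4 can be sketched as a merge of the two evidence sources named in the steps above. This is an illustration under assumptions: the `CertRecord` fields are hypothetical, issuer identity is reduced to a single string, and an unexpired certificate is treated as a live dependency even if it may already have been replaced.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class CertRecord:
    serial: str
    issuer: str      # identifier of the issuing CA (hypothetical field)
    service: str
    not_after: date


def remaining_dependencies(issuance_records, discovered, old_ca, today):
    """Unexpired certificates from old_ca, de-duplicated by serial number."""
    seen = {}
    for cert in list(issuance_records) + list(discovered):
        if cert.issuer == old_ca and cert.not_after >= today:
            seen.setdefault(cert.serial, cert)
    return sorted(seen.values(), key=lambda c: (c.service, c.serial))


# Illustrative data: CLM issuance records plus discovery-scan results.
issuance = [
    CertRecord("01", "old-ca", "billing-api", date(2025, 9, 1)),
    CertRecord("02", "old-ca", "storefront", date(2025, 1, 15)),   # already expired
    CertRecord("03", "new-ca", "storefront", date(2026, 1, 15)),
]
discovered = [
    CertRecord("01", "old-ca", "billing-api", date(2025, 9, 1)),   # also in the CLM
    CertRecord("7f", "old-ca", "legacy-ftp", date(2025, 12, 31)),  # unknown to the CLM
]

blockers = remaining_dependencies(issuance, discovered, "old-ca", date(2025, 3, 1))
safe_to_remove = not blockers
print([c.service for c in blockers], safe_to_remove)
# ['billing-api', 'legacy-ftp'] False
```

The `legacy-ftp` entry shows why both sources matter: it appears only in discovery, so a check against issuance records alone would have cleared the removal.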

The total elapsed time depends on the size of the affected inventory and the velocity of the teams owning affected services. Typical transition windows: 90 days for cloud-native estates with fast change cycles, 6–12 months for regulated enterprise estates with longer change windows, 12–24 months for estates including embedded systems or third-party software.
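
Whatever the overall window, the notification cadence from step 2 can be derived mechanically from the planned removal date. A minimal sketch, using the 90/60/30/14-day pattern given above; the function name and the example removal date are hypothetical.

```python
from datetime import date, timedelta

# Days before removal and the notice sent on that day (pattern from step 2).
NOTICE_OFFSETS = [
    (90, "announcement"),
    (60, "reminder"),
    (30, "escalation"),
    (14, "final warning"),
]


def notification_schedule(removal_date, offsets=NOTICE_OFFSETS):
    """Return (send_date, notice) pairs counted back from the removal date."""
    return [(removal_date - timedelta(days=days), notice)
            for days, notice in offsets]


schedule = notification_schedule(date(2025, 6, 30))
for send_date, notice in schedule:
    print(send_date.isoformat(), notice)
# 2025-04-01 announcement
# 2025-05-01 reminder
# 2025-05-31 escalation
# 2025-06-16 final warning
```

Longer transition windows would typically pass a different `offsets` list rather than stretch the same four notices.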

High-risk categories

Embedded and third-party trust stores are the categories most likely to surface unmanaged dependencies during the residual monitoring window. Pre-removal verification needs to be more thorough for these segments: sample more services, monitor longer, and have alternative-CA paths ready.

Where trust-store management breaks

Trust store as ambient configuration. No-one is responsible for the trust store; it is whatever the OS image had at install time, plus whatever has been added by automation no-one is currently watching. The first sign of a problem is a service breakage. The fix is explicit ownership and an authoritative source.

Update mechanism that bypasses operational visibility. OS vendors push trust-store updates as part of routine patching. The certificate operations team is unaware these updates have happened. When a service breaks because a CA was removed in a routine OS update, the troubleshooting takes hours because the change is invisible to the team most likely to recognise it. The fix is monitoring the trust-store state directly, not assuming knowledge of what should be there.
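
Monitoring the trust-store state directly can be as simple as fingerprinting every certificate in a bundle and comparing the result with the previous snapshot. The following is a sketch, assuming the trust store is available as a PEM bundle; the helper names are hypothetical, and the demo uses placeholder payloads rather than real certificates so that it runs anywhere.

```python
import hashlib
import re
import ssl

PEM_BLOCK = re.compile(
    r"-----BEGIN CERTIFICATE-----.*?-----END CERTIFICATE-----", re.DOTALL
)


def bundle_fingerprints(pem_text):
    """SHA-256 fingerprints (hex) of every certificate block in a PEM bundle."""
    prints = set()
    for block in PEM_BLOCK.findall(pem_text):
        der = ssl.PEM_cert_to_DER_cert(block)  # base64-decodes the block body
        prints.add(hashlib.sha256(der).hexdigest())
    return prints


def snapshot_diff(previous, current):
    """Report which fingerprints appeared or disappeared between snapshots."""
    return {"added": sorted(current - previous),
            "removed": sorted(previous - current)}


def fake_pem(payload):
    """Placeholder block for the demo; the payload is NOT a real certificate."""
    return f"-----BEGIN CERTIFICATE-----\n{payload}\n-----END CERTIFICATE-----\n"


before = fake_pem("AAAA") + fake_pem("BBBB")
after = fake_pem("AAAA") + fake_pem("CCCC")  # one CA swapped by a routine update
diff = snapshot_diff(bundle_fingerprints(before), bundle_fingerprints(after))
print(len(diff["added"]), len(diff["removed"]))  # 1 1
```

In use, `before` and `after` would be the contents of the bundle file read on successive runs, with any non-empty diff raised to the certificate operations team.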

Internal CA rotation without coordination. The internal CA was rotated by the team that runs it. The services that consume certificates from it weren't notified. Six weeks later, services start failing as old certificates expire and new ones (signed by the new CA) aren't trusted. The fix is treating internal CA rotation as a trust-store change event with the same coordination as any other.

No verification after removal. The CA was removed from the trust stores per the runbook. No-one confirmed the removal actually propagated everywhere. Services that retained the old trust list continue to trust certificates that should no longer be trusted. The fix is active verification — sample services across the estate to confirm they reflect the new trust state.

Browser-specific trust changes ignored. Major browsers (Chrome, Firefox, Safari, Edge) maintain their own trust programs and announce distrust events on their own timelines. A CA distrusted by Chrome may still be trusted by other clients. Services serving browser traffic need to migrate; services with internal traffic may not. The fix is to classify services by client base in the inventory, subscribe to the major browser trust-program announcements, and route distrust events to the affected segment of the estate rather than treating them as estate-wide.
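
Routing a distrust event to the affected segment is a filter over the inventory once each service carries a client-base classification. A minimal sketch under assumptions: the `Service` fields and the client-base labels (`browser`, `internal`, `partner`) are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Service:
    name: str
    client_bases: frozenset  # who connects: "browser", "internal", "partner", ...
    issuing_cas: frozenset   # CAs that issued the certificates the service presents


def affected_services(services, distrusted_ca, enforcing_clients):
    """Services presenting certs from the CA to clients that enforce the distrust."""
    return sorted(
        s.name for s in services
        if distrusted_ca in s.issuing_cas and s.client_bases & enforcing_clients
    )


# Illustrative estate.
services = [
    Service("storefront", frozenset({"browser"}), frozenset({"ca-x"})),
    Service("batch-etl", frozenset({"internal"}), frozenset({"ca-x"})),
    Service("partner-gw", frozenset({"browser", "partner"}), frozenset({"ca-y"})),
]

# A browser trust program distrusts ca-x: only browser-facing users of ca-x are hit.
print(affected_services(services, "ca-x", frozenset({"browser"})))  # ['storefront']
```

`batch-etl` uses the same CA but is left out because its clients do not enforce the browser program's decision.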

Embedded and third-party trust stores discovered post-event. The estate includes vendor appliances, embedded firmware, IoT devices, or third-party SaaS that pin specific CAs in trust stores the operations team does not control. These are usually invisible until a distrust event causes a service-disruption incident, at which point the operations team discovers them by tracing failures. The fix is proactive inventory of vendor-controlled trust dependencies as part of vendor onboarding, not after a vendor has shipped a product into production.

Maturity progression for trust-store management

The five-level PKI operational maturity model introduced in the pillar maps onto the trust-store domain as follows.

Level 1 — Ad-hoc. Trust stores are ambient configuration. No inventory exists; no-one is responsible. Trust-store changes happen as side effects of OS patching, container image updates, or vendor decisions, with the operations team unaware until something breaks. The first distrust event reveals how little the team knew about the trust state of the estate.

Level 2 — Tooled. Some trust stores are managed — typically the ones associated with the central CLM or with cloud-native platforms that have visible trust configuration. The long tail (legacy servers, embedded systems, third-party software) remains ambient. Distrust events are handled by reactive triage rather than systematic response. The team knows the trust stores it manages but discovers others by surprise.

Level 3 — Operationalised. Trust stores are inventoried across the estate, including OS-level, application-level, infrastructure, and (importantly) embedded and third-party. An authoritative trust list exists, segmented by trust scope. Distribution mechanisms are documented per estate segment. The transition-window pattern for removals is established. The team can answer the question “which services trust this CA?” without an emergency discovery exercise.

Level 4 — Integrated. Trust-store management has three structural elements operating together: an authoritative trust list, automated distribution to the managed segments of the estate, and quarterly verification that the deployed trust state matches the authoritative list. Drift is detected and resolved as part of routine operations rather than during incidents. Distrust events are handled via the standard transition-window pattern; the runbook is exercised, not improvised.

Level 5 — Intelligent. Trust-store state is integrated with broader infrastructure observability. Anomalies in trust state — a service trusting more or fewer CAs than the authoritative list expects — surface to the steering function. Vendor-controlled trust dependencies are catalogued as part of supplier risk management. CA lifecycle events are anticipated and migrations planned ahead of vendor deadlines, rather than reacting to announcements.
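
The drift check that levels 4 and 5 depend on reduces to a set comparison between the authoritative list for a trust scope and what a store actually contains. The following is a sketch, assuming both sides are expressed as sets of CA fingerprints; the scope names follow the segmentation described earlier, and the store identifiers and fingerprints are hypothetical.

```python
def trust_drift(authoritative, deployed):
    """Compare one deployed trust store against its authoritative list."""
    authoritative, deployed = set(authoritative), set(deployed)
    return {"unexpected": sorted(deployed - authoritative),
            "missing": sorted(authoritative - deployed)}


def drift_report(authoritative_by_scope, stores):
    """Return drift per store, omitting stores that match their list exactly."""
    report = {}
    for store_id, scope, fingerprints in stores:
        drift = trust_drift(authoritative_by_scope[scope], fingerprints)
        if drift["unexpected"] or drift["missing"]:
            report[store_id] = drift
    return report


# Illustrative authoritative lists, segmented by trust scope.
authoritative_by_scope = {
    "internal-services": {"aa11", "bb22"},
    "federated-partners": {"cc33"},
}

# (store_id, scope, deployed fingerprints) as collected from the estate.
stores = [
    ("os:web-01", "internal-services", {"aa11", "bb22"}),          # in sync
    ("jvm:billing", "internal-services", {"aa11", "dd44"}),        # drifted
    ("idp:broker", "federated-partners", {"cc33", "ee55"}),        # extra CA
]

print(drift_report(authoritative_by_scope, stores))
```

Stores that trust more CAs than expected and stores that trust fewer are reported separately, since the first is an over-trust exposure and the second is a pending outage.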

Most enterprises sit at level 1 on trust-store management because the domain is operationally invisible until an event makes it visible. Progression to level 3 typically follows a distrust event that exposes the gap; the right response is to use the event as the impetus for systematic change rather than treating it as a one-time triage. Progression to level 4 takes a deliberate quarter-on-quarter discipline that most organisations defer, because trust-store work causes no visible pain while it is going well.

Further reading within this cluster

- Enterprise PKI operating model — the pillar page

- Certificate governance and the steering function

- Certificate discovery in practice

- Certificate issuance workflows

- Certificate renewal operations

- Certificate revocation operations

- Platform onboarding for certificate automation

- Change management for PKI operations