Post-Quantum Cryptography Migration: A Decision-Maker's Roadmap

Part of the Post-Quantum PKI Migration Guide

NIST published its first three post-quantum cryptography standards — FIPS 203, FIPS 204, and FIPS 205 — in August 2024. Under the transition timeline defined in NIST IR 8547 (November 2024), quantum-vulnerable algorithms at 112-bit security strength will be deprecated by 2030 and all RSA/ECC algorithms disallowed by 2035. NSA's CNSA 2.0 moves faster: all new National Security Systems acquisitions must be CNSA 2.0 compliant by January 1, 2027, with custom applications and legacy equipment updated or replaced by 2033.

This is not a theoretical exercise. If your organization handles data with a secrecy requirement beyond 2030, or sells into federal, defense, financial, or healthcare verticals, you are already inside the migration window. The question is not whether to act but what to decide first, what to defer, and where hybrid strategies buy you time without creating technical debt.

This page provides a decision framework for CISOs, VPs of Engineering, and PKI architects. It covers the five decisions that determine whether a PQC migration succeeds or stalls: scoping the cryptographic inventory, selecting algorithms, choosing a hybrid certificate strategy, evaluating vendor readiness, and phasing the rollout.

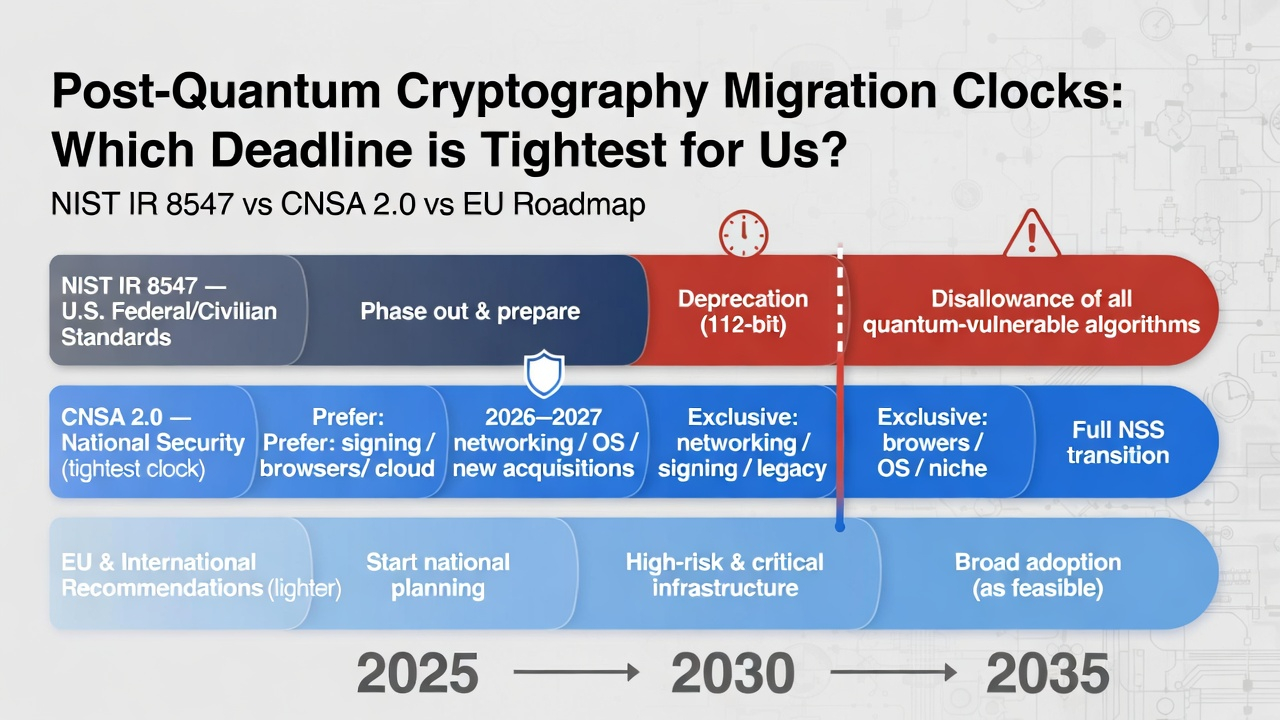

The Regulatory Clock: Key Deadlines You Cannot Ignore

Three overlapping compliance frameworks set the pace for PQC migration, and they do not all agree on timelines.

NIST IR 8547 (published November 2024) establishes the broadest mandate. Public-key algorithms at 112-bit security (RSA-2048, ECC P-224) are deprecated after 2030, meaning they should not be used for new deployments. All quantum-vulnerable public-key algorithms are disallowed after 2035, including 128-bit-and-higher variants such as RSA-3072, P-256, and P-384. Symmetric algorithms at 112-bit security follow the same 2030 deprecation; three-key 3DES encryption is already disallowed under NIST SP 800-131A. NIST's stated expectation is that organizations begin implementing PQC standards now, not at the deadline.
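Inventory tooling can encode these rules as a simple lookup. The sketch below is minimal and rests on a simplified reading of NIST IR 8547. The function name `ir8547_status` is hypothetical. Strength values follow NIST SP 800-57 Part 1: RSA-2048 and P-224 at 112 bits, RSA-3072 and P-256 at 128 bits. The sketch reads "after 2030" as "from 2031 onward."

```python
# Simplified reading of the NIST IR 8547 transition rules for
# quantum-vulnerable public-key algorithms. Not an official NIST tool.
QUANTUM_VULNERABLE = {"RSA", "DH", "ECDH", "ECDSA", "EdDSA"}


def ir8547_status(algorithm: str, strength_bits: int, year: int) -> str:
    """Return 'acceptable', 'deprecated', or 'disallowed' for a given year.

    strength_bits is the classical security strength, e.g. 112 for RSA-2048
    or P-224, 128 for RSA-3072 or P-256, 192 for P-384.
    """
    if algorithm not in QUANTUM_VULNERABLE:
        raise ValueError(f"not a quantum-vulnerable public-key algorithm: {algorithm}")
    if strength_bits < 112:
        return "disallowed"  # already disallowed before the PQC transition
    if year > 2035:
        return "disallowed"  # every quantum-vulnerable strength after 2035
    if strength_bits < 128 and year > 2030:
        return "deprecated"  # 112-bit strength after 2030
    return "acceptable"


if __name__ == "__main__":
    for label, alg, bits in [("RSA-2048", "RSA", 112), ("ECDSA P-256", "ECDSA", 128)]:
        print(label, [ir8547_status(alg, bits, y) for y in (2030, 2031, 2036)])
```

Under this reading, RSA-2048 becomes deprecated in 2031. P-256 stays acceptable until the 2035 cutoff.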

CNSA 2.0 applies to National Security Systems but sets expectations that ripple through the defense supply chain. Software and firmware signing should already prefer CNSA 2.0 algorithms; they become mandatory by 2030. Web browsers, cloud services, and operating systems must support and prefer CNSA 2.0 by 2025–2027, with exclusive use required by 2033. Networking equipment (VPNs, routers) must transition by 2030. Custom applications and legacy equipment must be updated or replaced by 2033. If you are a vendor or subcontractor to the US government, these deadlines are your deadlines. See PQC Timeline & Mandates for the full CNSA 2.0 technology-specific milestone table.

EU and international frameworks are less prescriptive but converging. The European Commission has recommended switching to PQC "as swiftly as possible," with a coordinated roadmap targeting transition start by end of 2026 and critical infrastructure protection by end of 2030. ANSSI (France) and BSI (Germany) have issued algorithm guidance that largely aligns with NIST but with some divergence on hybrid requirements and algorithm preferences. Australia has targeted 2030 for PQC adoption in government systems.

The practical implication: if you serve multiple jurisdictions, you need algorithm selections and certificate strategies that satisfy the strictest applicable timeline — which is typically CNSA 2.0 for defense-adjacent organizations and NIST IR 8547 for everyone else.

Decision 1: Scoping the Cryptographic Inventory

Every PQC migration guide begins with "do a cryptographic inventory." Few explain what that actually means at enterprise scale or how to scope it so the effort does not consume the entire migration budget.

What the Inventory Must Capture

A useful PQC inventory is not a list of certificates. It is a map of every place your organization uses asymmetric cryptography, including contexts that certificate lifecycle management tools do not typically discover:

Certificate-based PKI is the most visible layer — TLS certificates, code signing certificates, client authentication certificates, S/MIME certificates, device identity certificates. Your CLM platform should already have visibility here. The PQC-relevant data points are the algorithm and key size for each certificate, the issuing CA chain (because you need PQC-safe roots, not just PQC-safe leaf certs), and the remaining validity period (certificates expiring before 2030 may not need PQC migration at all).

Non-certificate asymmetric crypto is where most organizations have blind spots. This category includes:

- SSH keys and PGP/GPG keys.
- JWT signing keys and API authentication tokens signed with RSA or ECDSA.
- SAML assertion signatures and Kerberos PKINIT configurations.
- Custom application-level encryption built on libraries such as OpenSSL or Bouncy Castle.

None of these appear in your CLM tool. Finding them requires network scanning, code analysis, and configuration audits.
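One example of a configuration audit is flagging quantum-vulnerable keys in an OpenSSH `authorized_keys` file. The sketch below is a simplified parser for illustration, and its function name is hypothetical. It splits on whitespace, so quoted option values that contain spaces are not handled robustly. It treats every classical key type (RSA, DSA, ECDSA, Ed25519) as quantum-vulnerable, because all of them rely on factoring or discrete logarithms.

```python
# OpenSSH key type names built on quantum-vulnerable math. Matching by prefix
# also catches certificate variants such as "ssh-rsa-cert-v01@openssh.com"
# and FIDO-backed "sk-" keys.
VULNERABLE_PREFIXES = ("ssh-rsa", "ssh-dss", "ecdsa-sha2-", "ssh-ed25519",
                       "sk-ecdsa-sha2-", "sk-ssh-ed25519")


def find_vulnerable_keys(authorized_keys_text: str) -> list[tuple[int, str, str]]:
    """Return (line_number, key_type, comment) for each quantum-vulnerable key."""
    findings = []
    for lineno, line in enumerate(authorized_keys_text.splitlines(), start=1):
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        tokens = line.split()
        # An options field (e.g. from="...") may precede the key type, so look
        # for the first token that matches a known key type.
        for i, token in enumerate(tokens):
            if token.startswith(VULNERABLE_PREFIXES):
                comment = " ".join(tokens[i + 2:])  # skip the base64 key blob
                findings.append((lineno, token, comment))
                break
    return findings
```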

Embedded and IoT device cryptography is the hardest category. It covers devices with burned-in keys, firmware signed with RSA keys, and protocols that negotiate key exchange at connection time. The critical question for each device class is whether it can accept a firmware update that includes PQC algorithm support. If not, the device is on a hardware replacement timeline, and that timeline must start now.

Data-at-rest encryption keys protected by asymmetric key wrapping are the final category. If you use RSA key wrapping for database encryption keys, backup encryption, or key escrow, those wrapped keys are quantum-vulnerable even though the underlying symmetric encryption (AES-256) is not.

How to Scope Without Boiling the Ocean

Prioritize by data sensitivity and secrecy lifetime. The "harvest now, decrypt later" threat means that data encrypted today with quantum-vulnerable key exchange is at risk if it must remain confidential past the point where a cryptographically relevant quantum computer (CRQC) exists. Intelligence community estimates for CRQC timelines vary, but the planning assumption built into NIST and NSA guidance is that it could happen within the next decade.

Work backward from your data classification policy. If you have data classified as needing 25-year confidentiality, the key exchange protecting that data today is already overdue for PQC migration. Data with a 5-year secrecy requirement has more runway — but not much, given that migration itself takes years.
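A common way to formalize this backward reasoning is Mosca's inequality. It uses three quantities:

- x: the time data must stay secret.
- y: the time the migration takes.
- z: the time until a CRQC exists.

If x + y exceeds z, data protected today is exposed. This heuristic is not part of the NIST or NSA guidance. The 5-year migration and 10-year CRQC figures below are placeholder planning assumptions, not forecasts.

```python
def mosca_exposure_years(secrecy_years: float, migration_years: float,
                         years_until_crqc: float) -> float:
    """Return x + y - z from Mosca's inequality.

    A positive value is the number of years during which data encrypted with
    today's quantum-vulnerable key exchange could be decrypted while it is
    still supposed to be confidential.
    """
    return secrecy_years + migration_years - years_until_crqc


if __name__ == "__main__":
    # Placeholder assumptions: 5-year migration, CRQC in 10 years.
    for label, secrecy in [("25-year records", 25), ("5-year records", 5)]:
        gap = mosca_exposure_years(secrecy, migration_years=5, years_until_crqc=10)
        status = "exposed" if gap > 0 else ("no slack" if gap == 0 else "within margin")
        print(f"{label}: {status} ({gap:+.0f} years)")
```

Under these assumptions, 25-year records come out 20 years exposed. The 5-year records have zero slack, which is the "more runway, but not much" point above.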

For authentication-only use cases (code signing, TLS server authentication, document signing), the threat model is different. Recording a signed exchange today gives an attacker no secret to decrypt later. The risk materializes when a CRQC can derive private signing keys from public keys and forge signatures in real time. That threat arrives later than harvest-now-decrypt-later, but it still falls within NIST's 2035 disallowance window.

Decision 2: Algorithm Selection — ML-KEM, ML-DSA, and SLH-DSA

NIST's three finalized standards each serve a distinct purpose. The algorithm you choose depends on the use case, not on a blanket organizational preference.

FIPS 203: ML-KEM (Key Encapsulation)

ML-KEM replaces RSA key exchange and ECDH for establishing shared secrets. It is based on the Module Learning with Errors (MLWE) problem and derived from the CRYSTALS-Kyber submission.

ML-KEM has three parameter sets. ML-KEM-512 provides NIST security category 1 (roughly equivalent to AES-128) with an 800-byte public key and 768-byte ciphertext. ML-KEM-768 targets category 3 (AES-192 equivalent) with a 1,184-byte public key and 1,088-byte ciphertext. ML-KEM-1024 reaches category 5 (AES-256 equivalent) with a 1,568-byte public key and 1,568-byte ciphertext.

Decision guidance: ML-KEM-768 is the general-purpose recommendation for most TLS and key exchange deployments. CNSA 2.0 requires ML-KEM-1024 for National Security Systems. ML-KEM-512 is suitable only where bandwidth constraints are severe and the security requirement is genuinely at the 128-bit level. NIST has also selected HQC (announced March 2025) as a code-based backup KEM using different mathematical foundations than ML-KEM, with a draft standard expected in 2026 and finalization in 2027.

FIPS 204: ML-DSA (Digital Signatures — Primary)

ML-DSA is the primary standard for digital signatures, replacing RSA and ECDSA signatures. It is derived from CRYSTALS-Dilithium and based on module lattice problems.

The key size increase over classical algorithms is substantial. ML-DSA-44 (category 1) has a 1,312-byte public key and 2,420-byte signature — compared to 32 bytes and 64 bytes respectively for Ed25519. ML-DSA-65 (category 3) produces 1,952-byte public keys and 3,309-byte signatures. ML-DSA-87 (category 5) reaches 2,592-byte public keys and 4,627-byte signatures.

Decision guidance: ML-DSA-65 is the appropriate default for most certificate-based PKI, TLS authentication, and code signing. ML-DSA-87 is required under CNSA 2.0 for NSS. ML-DSA-44 is adequate for internal-only systems where performance on constrained devices outweighs the additional security margin. NIST is also developing FIPS 206 based on FALCON (to be renamed FN-DSA), which offers smaller signatures (~666 bytes at category 1) but requires careful floating-point handling that makes implementation more error-prone. The FN-DSA draft was submitted for review in August 2025, with finalization expected late 2026 or early 2027.

FIPS 205: SLH-DSA (Digital Signatures — Conservative Backup)

SLH-DSA is derived from SPHINCS+ and uses stateless hash-based cryptography — a fundamentally different mathematical foundation than ML-DSA's lattice problems. Its security relies solely on the properties of hash functions, which are extremely well-understood.

SLH-DSA's tradeoff profile is the inverse of ML-DSA: very small public keys (as low as 32 bytes) but very large signatures (minimum 7,856 bytes). Signing is also significantly slower.
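The inverse tradeoff is easiest to see side by side. The sketch below tabulates public-key and signature sizes from three sources:

- FIPS 204 for ML-DSA.
- FIPS 205 for SLH-DSA, using the small-signature "s" parameter sets.
- RFC 8032 for Ed25519.

It expresses each size as a multiple of Ed25519's. The helper name is illustrative.

```python
# (public key bytes, signature bytes)
SIZES = {
    "Ed25519": (32, 64),
    "ML-DSA-44": (1312, 2420),
    "ML-DSA-65": (1952, 3309),
    "ML-DSA-87": (2592, 4627),
    "SLH-DSA-SHA2-128s": (32, 7856),
    "SLH-DSA-SHA2-192s": (48, 16224),
    "SLH-DSA-SHA2-256s": (64, 29792),
}


def multiple_of_ed25519(name: str) -> tuple[float, float]:
    """Return (public key, signature) size as multiples of Ed25519's."""
    pk, sig = SIZES[name]
    base_pk, base_sig = SIZES["Ed25519"]
    return pk / base_pk, sig / base_sig


if __name__ == "__main__":
    for name, (pk, sig) in SIZES.items():
        pk_x, sig_x = multiple_of_ed25519(name)
        print(f"{name:<20} pk {pk:>6} B ({pk_x:5.1f}x)  sig {sig:>6} B ({sig_x:6.1f}x)")
```

SLH-DSA-SHA2-128s keeps a 32-byte public key, the same as Ed25519, while its signature is about 123 times larger. ML-DSA grows both.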

Decision guidance: SLH-DSA is not a general-purpose replacement for ML-DSA. Its role is as a hedge — if a breakthrough attack against lattice-based cryptography is discovered, SLH-DSA provides a fallback that relies on entirely different assumptions. Use SLH-DSA for root CA certificates, firmware signing for long-lived infrastructure, and any context where you want maximum conservatism and can tolerate the signature size overhead. Important CNSA 2.0 constraint: SLH-DSA is not included in CNSA 2.0 and is not approved for National Security Systems use. If your systems fall under CNSA 2.0 requirements, use LMS/XMSS (NIST SP 800-208) as the approved hash-based signature option, not SLH-DSA.

Algorithm Selection by Use Case

- TLS key exchange: ML-KEM-768 (or ML-KEM-1024 for CNSA 2.0). Chrome and Cloudflare have already deployed hybrid X25519+ML-KEM-768 in production.

- TLS server authentication: ML-DSA-65 for leaf certificates. Consider SLH-DSA for root and intermediate CA certificates if you want algorithm diversity in the trust chain — but note that SLH-DSA is not CNSA 2.0 compliant; for NSS, use ML-DSA throughout the chain.

- Code signing and firmware signing: ML-DSA-65 or ML-DSA-87. For CNSA 2.0 compliance, use LMS/XMSS (NIST SP 800-208) as a near-term option — these stateful hash-based schemes are already standardized and recommended by NSA for immediate deployment, with ML-DSA taking over as implementations mature. SLH-DSA is appropriate where you need a stateless hash-based scheme for code signing in environments where managing LMS/XMSS state is impractical, but only for non-NSS use cases.

- Document signing (S/MIME, PDF): ML-DSA-65. Signature size will increase document sizes but is manageable for typical enterprise workflows.

- IoT device identity: ML-DSA-44 where device constraints require it, with awareness that this provides category 1 security only. Evaluate whether devices can support ML-DSA-65 before defaulting to the smaller parameter set.
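The use-case guidance above can be captured as a small lookup shared by tooling or architecture reviews. This encoding is hypothetical: the use-case keys and function name are illustrative. The CNSA 2.0 column applies the ML-KEM-1024, ML-DSA-87, and LMS/XMSS requirements described earlier.

```python
# use case -> (default choice, choice when CNSA 2.0 applies)
RECOMMENDATIONS = {
    "tls_key_exchange": ("ML-KEM-768", "ML-KEM-1024"),
    "tls_server_auth": ("ML-DSA-65", "ML-DSA-87"),
    "code_signing": ("ML-DSA-65", "LMS/XMSS (SP 800-208) or ML-DSA-87"),
    "document_signing": ("ML-DSA-65", "ML-DSA-87"),
    "iot_device_identity": ("ML-DSA-65", "ML-DSA-87"),
}


def select_algorithm(use_case: str, cnsa2: bool = False,
                     constrained_device: bool = False) -> str:
    default, cnsa_choice = RECOMMENDATIONS[use_case]
    if cnsa2:
        # No SLH-DSA options here: it is not approved under CNSA 2.0.
        return cnsa_choice
    if use_case == "iot_device_identity" and constrained_device:
        # Category 1 only: use after confirming ML-DSA-65 is infeasible.
        return "ML-DSA-44"
    return default
```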

Decision 3: Hybrid Certificate Strategy

The transition to PQC will not happen as a single switchover. There will be a multi-year period where some endpoints support PQC and others do not. Hybrid certificate strategies bridge this gap — but the options are more complex than most vendor marketing suggests.

Understanding the Hybrid Approaches

Four distinct approaches are under active development and standardization:

Composite certificates combine a classical algorithm (RSA or ECDSA) and a PQC algorithm (ML-DSA) into a single composite public key with a composite signature. The IETF LAMPS working group draft for Composite ML-DSA is in working group last call and expected to become an RFC in 2026. The "AND" validation model (both signatures must verify) is the standard direction. Composite certificates present as a single algorithm OID, giving them protocol backwards compatibility — they work in protocols that are not explicitly hybrid-aware.

Catalyst certificates (also called X.509 Alternative Keys, standardized in ITU-T X.509) use a classical outer certificate structure with PQC keys and signatures embedded in non-critical extensions. Legacy clients validate the classical portion and ignore the extensions; PQC-aware clients validate both. Keyfactor's EJBCA supports this approach as "Chimera certificates."

Chameleon certificates encode the PQC certificate as a delta against the classical certificate, reducing size at the cost of increased complexity. They are the most compact hybrid option but also the least mature.

Parallel certificate chains issue separate classical and PQC certificates for the same identity, validated independently. This avoids the complexity of hybrid formats but requires managing two complete PKI hierarchies and doubles certificate management overhead.
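The practical difference between composite and catalyst validation fits in a few lines. The callables below are hypothetical stand-ins for real signature checks. The model is deliberately simplified: it omits path building, revocation, and policy checks.

```python
from typing import Callable

SignatureCheck = Callable[[], bool]  # stand-in for verifying one signature


def composite_valid(classical: SignatureCheck, pqc: SignatureCheck,
                    client_knows_composite_oid: bool) -> bool:
    if not client_knows_composite_oid:
        # The certificate advertises a single unfamiliar algorithm OID,
        # so a verifier that does not know it cannot validate anything.
        return False
    return classical() and pqc()  # "AND" model: both must verify


def catalyst_valid(classical: SignatureCheck, pqc: SignatureCheck,
                   client_is_pqc_aware: bool) -> bool:
    if not client_is_pqc_aware:
        # Legacy clients ignore the non-critical alternative-signature
        # extension and get classical-only assurance.
        return classical()
    return classical() and pqc()
```

A legacy client accepts a catalyst certificate on the strength of its classical signature alone. The same client rejects a composite certificate outright.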

Which Strategy Fits Your Organization

- If you control both endpoints (e.g., internal microservices, device-to-cloud communication, API-to-API), migrate directly to pure PQC certificates. Hybrid complexity is unnecessary when you can update both sides simultaneously. This is the simplest path and the one with the least technical debt.

- If you need backward compatibility with clients you cannot update in lockstep (e.g., public-facing TLS, IoT fleets with mixed firmware versions), composite certificates are the most practical choice once the RFC is finalized. This holds provided every relying party can eventually be upgraded to recognize the composite algorithm. Composite certificates offer protocol backwards compatibility without requiring changes to TLS or CMS implementations. However, a client that does not recognize the composite OID will reject the certificate. For clients that can never be updated, only catalyst certificates or parallel chains keep working.

- If you are in a standards-heavy or regulated environment and need to demonstrate compliance with specific hybrid schemes, evaluate which approach your CA vendor and HSM vendor support today. As of early 2026, Bouncy Castle supports composite, catalyst (X.509 Alternative), and chameleon formats. OpenSSL 3.5 supports ML-KEM, ML-DSA, and SLH-DSA natively, without the OQS provider, but has limited hybrid certificate support. As of March 2026, IANA has not yet issued standardized OIDs for composite algorithms. This is a real interoperability risk for early adopters.

- If you are adopting hybrid TLS key exchange (the "X25519+ML-KEM-768" approach already deployed by Google Chrome and Cloudflare), note that this addresses confidentiality only, not authentication. Hybrid key exchange and hybrid certificates solve different problems. You likely need both.
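For reference, the X25519+ML-KEM-768 hybrid group concatenates the two key shares and the two shared secrets. The IETF draft draft-ietf-tls-ecdhe-mlkem names this group X25519MLKEM768. The sketch below follows our reading of that draft, which places the ML-KEM component first for this group. It only splits and joins byte strings and performs no cryptography. The function names are hypothetical.

```python
MLKEM768_EK_LEN = 1184  # ML-KEM-768 encapsulation key (FIPS 203)
MLKEM768_CT_LEN = 1088  # ML-KEM-768 ciphertext (FIPS 203)
X25519_LEN = 32         # X25519 public value (RFC 7748)


def _split(share: bytes, first_len: int) -> tuple[bytes, bytes]:
    expected = first_len + X25519_LEN
    if len(share) != expected:
        raise ValueError(f"expected {expected} bytes, got {len(share)}")
    return share[:first_len], share[first_len:]


def split_client_share(share: bytes) -> tuple[bytes, bytes]:
    """ClientHello share -> (ML-KEM-768 encapsulation key, X25519 public value)."""
    return _split(share, MLKEM768_EK_LEN)


def split_server_share(share: bytes) -> tuple[bytes, bytes]:
    """ServerHello share -> (ML-KEM-768 ciphertext, X25519 public value)."""
    return _split(share, MLKEM768_CT_LEN)


def combine_secrets(mlkem_secret: bytes, x25519_secret: bytes) -> bytes:
    # The 64-byte concatenation enters the TLS 1.3 key schedule, so the
    # session stays confidential unless both components are broken.
    if len(mlkem_secret) != 32 or len(x25519_secret) != 32:
        raise ValueError("both shared secrets must be 32 bytes")
    return mlkem_secret + x25519_secret
```

Plain X25519 sends 32-byte shares in each direction. The hybrid group sends 1,216 bytes in the ClientHello and 1,120 bytes in the ServerHello. That adds roughly 2.2KB per handshake, far less than the certificate growth discussed next.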

Certificate Size Impact

The shift from ECDSA to ML-DSA means certificates grow from roughly 500–800 bytes to roughly 4,000–8,000 bytes depending on the parameter set, and more when composite signatures are included. Certificate chains with three levels of ML-DSA-65 signatures will produce TLS handshakes that are meaningfully larger than today's: roughly 12–16KB of authentication data vs ~2.5KB for ECDSA chains. This matters for constrained environments, DTLS-based IoT protocols, and any system where certificate size affects connection latency. See PQC Impact on TLS & Certificates for quantified performance data. Test with realistic chain depths before committing to a parameter set.
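A back-of-the-envelope estimator lets you check the chain depths you actually run. This sketch makes several assumptions:

- The same algorithm is used throughout the chain.
- The root stays in the client trust store and is not sent.
- Each certificate carries a fixed overhead for names, extensions, and SCTs. The 600 bytes used here is a placeholder; replace it with measurements from your own certificates.
- ECDSA P-256 signatures are DER-encoded and vary by a few bytes, so 72 is an approximation.

Results shift noticeably with the overhead figure and the number of SCTs.

```python
# (public key bytes, signature bytes)
SIZES = {
    "ECDSA-P256": (65, 72),  # uncompressed point; DER signature about 70-72 bytes
    "ML-DSA-44": (1312, 2420),
    "ML-DSA-65": (1952, 3309),
    "ML-DSA-87": (2592, 4627),
}


def cert_bytes(alg: str, overhead: int = 600) -> int:
    """Approximate size of one certificate: subject key + issuer signature + overhead."""
    pk, sig = SIZES[alg]
    return pk + sig + overhead


def tls13_auth_bytes(alg: str, certs_sent: int = 2, overhead: int = 600) -> int:
    """Certificates sent by the server plus the CertificateVerify signature."""
    return certs_sent * cert_bytes(alg, overhead) + SIZES[alg][1]


if __name__ == "__main__":
    for alg in SIZES:
        print(f"{alg:<11} cert ~{cert_bytes(alg):>5} B  "
              f"handshake auth ~{tls13_auth_bytes(alg):>6} B")
```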

Decision 4: Vendor Readiness Criteria

Evaluating whether your CLM platform, CA, HSM, and application stack are ready for PQC requires asking specific technical questions — not accepting a vendor's "quantum-ready" marketing claim at face value.

CA and PKI Platform Readiness

- Does the CA support issuing certificates with ML-DSA-44, ML-DSA-65, and ML-DSA-87 as the signing algorithm for both root/intermediate and leaf certificates? Support for "experimental" or "pre-standardized" Dilithium round 3 is not the same as support for the finalized FIPS 204 algorithm — OIDs and parameters changed between the draft and final versions.

- Does the CA support hybrid certificate issuance (composite, catalyst, or parallel chains)? If so, which format, and using which OIDs? Pre-standard OIDs used by Bouncy Castle (1.3.6.1.4.1.18227.2.1 for composite) will not be compatible with the final IANA-assigned OIDs.

- Can the CA issue ML-KEM certificates for key encapsulation use cases, or only signature certificates?

- Does the CA's certificate profile system support the larger key and signature sizes without truncation or field-length violations?
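One quick readiness check is to compare the signature and key OIDs in a vendor's sample certificates against the final NIST assignments. The sketch below hard-codes the ML-DSA (FIPS 204) and ML-KEM (FIPS 203) OIDs as we understand them from the NIST Computer Security Objects Register. It also includes the pre-standard composite OID quoted above. Verify the table against the register before relying on it. SLH-DSA and HashML-DSA OIDs are omitted for brevity.

```python
FINAL_NIST_OIDS = {
    "2.16.840.1.101.3.4.3.17": "ML-DSA-44",
    "2.16.840.1.101.3.4.3.18": "ML-DSA-65",
    "2.16.840.1.101.3.4.3.19": "ML-DSA-87",
    "2.16.840.1.101.3.4.4.1": "ML-KEM-512",
    "2.16.840.1.101.3.4.4.2": "ML-KEM-768",
    "2.16.840.1.101.3.4.4.3": "ML-KEM-1024",
}
KNOWN_PRESTANDARD_OIDS = {
    "1.3.6.1.4.1.18227.2.1": "pre-standard composite (Bouncy Castle)",
}


def classify_oid(oid: str) -> str:
    if oid in FINAL_NIST_OIDS:
        return f"final: {FINAL_NIST_OIDS[oid]}"
    if oid in KNOWN_PRESTANDARD_OIDS:
        return f"pre-standard: {KNOWN_PRESTANDARD_OIDS[oid]}"
    return "unrecognized: ask the vendor which specification defines this OID"
```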

HSM Readiness

- Does the HSM support ML-DSA and ML-KEM key generation, signing, and verification in hardware? Some HSMs support PQC through firmware updates; others require hardware replacement. Verify whether PQC operations execute inside the HSM boundary or are offloaded to software — the latter defeats the purpose of using an HSM.

- Is the HSM firmware FIPS 140-3 validated with PQC algorithms included in the validation scope? As of early 2026, NIST's Cryptographic Algorithm Validation Program (CAVP) is accepting ML-DSA, ML-KEM, and SLH-DSA submissions, but FIPS 140-3 module validations with PQC are still limited. The average FIPS 140-3 validation currently takes over 500 days. This is a real procurement risk for compliance-driven organizations.

- Can the HSM handle the larger key sizes? ML-DSA-87 private keys are 4,896 bytes, roughly 150 times the 32-byte private scalar of an ECDSA P-256 key. HSMs with fixed key slot sizes may need configuration changes or firmware updates.
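The key-slot question comes down to arithmetic. The sketch below lists private-key sizes from FIPS 203 and FIPS 204, using the encodings defined in those standards. It reports which keys would not fit a given slot. The 4,096-byte slot in the usage line is hypothetical, not a property of any particular HSM.

```python
# Private (decapsulation / signing) key sizes in bytes.
PRIVATE_KEY_BYTES = {
    "ECDSA-P256": 32,  # raw private scalar
    "ML-KEM-512": 1632,
    "ML-KEM-768": 2400,
    "ML-KEM-1024": 3168,
    "ML-DSA-44": 2560,
    "ML-DSA-65": 4032,
    "ML-DSA-87": 4896,
}


def keys_exceeding_slot(slot_bytes: int) -> list[str]:
    """Return the key types whose private keys are larger than slot_bytes."""
    return [name for name, size in PRIVATE_KEY_BYTES.items() if size > slot_bytes]


if __name__ == "__main__":
    print(keys_exceeding_slot(4096))
    ratio = PRIVATE_KEY_BYTES["ML-DSA-87"] / PRIVATE_KEY_BYTES["ECDSA-P256"]
    print(f"ML-DSA-87 vs P-256 private key: {ratio:.0f}x")
```

With a 4,096-byte slot, only ML-DSA-87 overflows. Its private key is 153 times the size of a P-256 scalar.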

Application and Protocol Stack Readiness

- Does your TLS library support ML-KEM and ML-DSA? OpenSSL (natively from 3.5, or in earlier 3.x releases via the OQS provider), BoringSSL (used in Chrome), and Bouncy Castle all have varying levels of PQC support. Check the specific version you deploy, not the library's marketing page.

- Do your ACME clients support requesting certificates with PQC algorithms? If you use automated certificate lifecycle management, this is a blocking dependency. The concurrent shift to 200-day (March 2026) and 47-day (2029) public SSL/TLS certificate lifespans makes ACME automation mandatory infrastructure — ensure your ACME stack can handle PQC key types before the two requirements converge.

- Do your code signing, document signing, and email signing toolchains support ML-DSA signatures? Many enterprise signing workflows depend on tools such as jarsigner, signtool, and gpg. Their ML-DSA support is absent or limited to recent releases, so confirm support in the exact versions you run.

- Can your monitoring and observability tools parse certificates with PQC algorithms without errors?
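The renewal arithmetic shows why automation becomes non-negotiable. This sketch uses the maximum lifetimes from CA/Browser Forum ballot SC-081: 398, 200, 100, and 47 days, with the 100-day step taking effect in March 2027. It assumes a hypothetical policy of renewing once two-thirds of a certificate's lifetime has elapsed. The 5,000-endpoint fleet is also a placeholder. Substitute your own renewal window and fleet size.

```python
import math

# Maximum public TLS certificate lifetime (days) by effective date.
MAX_VALIDITY_DAYS = {
    "until 2026-03-15": 398,
    "from 2026-03-15": 200,
    "from 2027-03-15": 100,
    "from 2029-03-15": 47,
}


def issuances_per_year(validity_days: int, renew_fraction: float = 2 / 3) -> int:
    """Issuances per certificate per year, rounded up, if each certificate is
    replaced once renew_fraction of its lifetime has elapsed."""
    return math.ceil(365 / (validity_days * renew_fraction))


if __name__ == "__main__":
    endpoints = 5_000  # hypothetical fleet size
    for period, days in MAX_VALIDITY_DAYS.items():
        per_cert = issuances_per_year(days)
        print(f"{period}: {per_cert} per cert/year, {per_cert * endpoints:,} total")
```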

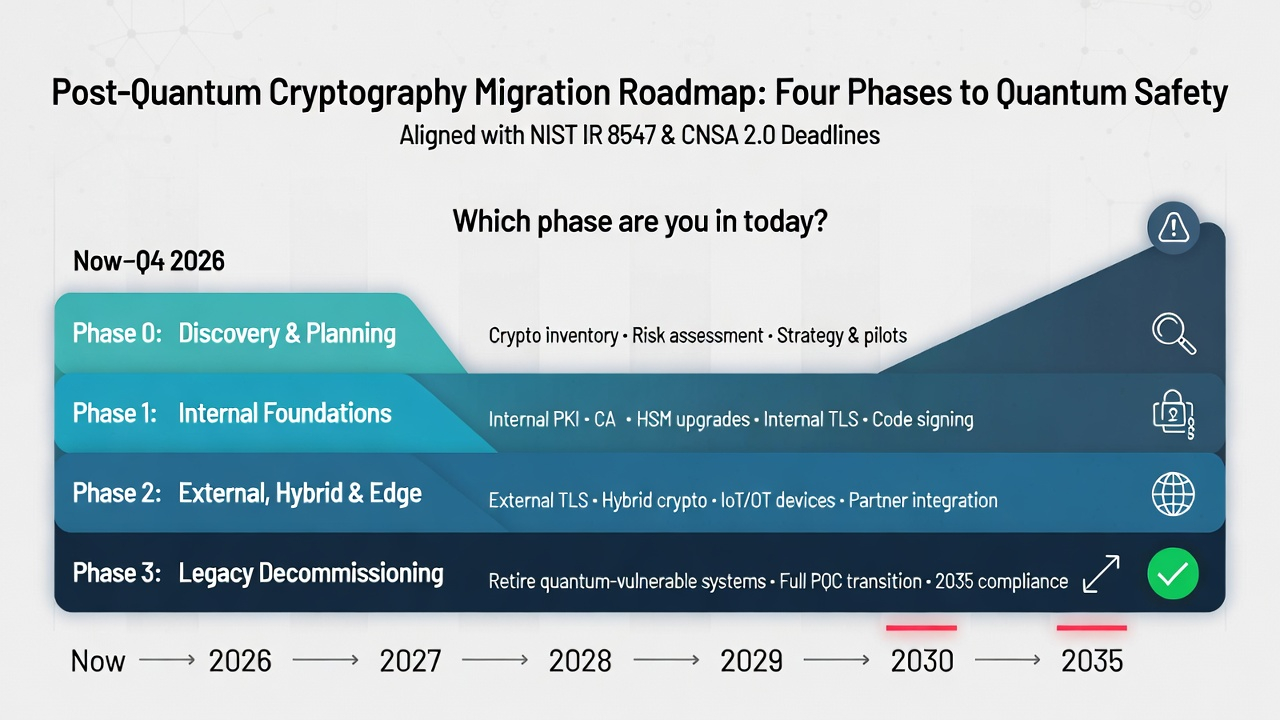

Decision 5: Phased Migration Framework

A PQC migration that attempts to convert everything simultaneously will fail. A phased approach, sequenced by risk exposure and technical dependency, is the only viable strategy for enterprises.

Phase 0: Foundation (Now – Q4 2026)

- Complete the cryptographic inventory for your highest-sensitivity data stores and authentication systems. Establish baseline metrics: how many asymmetric keys, what algorithms, what validity periods, which CA chains.

- Deploy a PQC test CA hierarchy using ML-DSA-65 for internal, non-production use. Issue test certificates, run them through your TLS stack, measure handshake latency and certificate chain size impact. Identify integration failures early.

- Enable hybrid key exchange (X25519+ML-KEM-768) on external-facing TLS endpoints where your CDN or load balancer supports it. This protects in-transit confidentiality against harvest-now-decrypt-later without changing your certificate infrastructure.

- Verify that your HSM vendor has a PQC firmware roadmap with CAVP validation timelines. If they do not, begin evaluating alternatives.

- Ensure your certificate automation infrastructure can handle the CA/Browser Forum's 200-day certificate lifespan (effective March 2026). The ACME automation and protocol-based enrollment you build for short-lived certificates is the same infrastructure that enables PQC certificate deployment at scale.
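The baseline metrics listed above can be computed from even a simple inventory export. This sketch is minimal. The `KeyRecord` fields, the algorithm labels, and the 2030 cutoff test are illustrative assumptions, not a schema used by any particular CLM or discovery tool.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date
from typing import Optional

PQC_PREFIXES = ("ML-KEM", "ML-DSA", "SLH-DSA", "LMS", "XMSS")


@dataclass(frozen=True)
class KeyRecord:
    location: str              # host, repository, HSM partition, ...
    algorithm: str             # e.g. "RSA-2048", "ECDSA-P256", "ML-DSA-65"
    not_after: Optional[date]  # None for keys with no expiry (SSH, JWT signing)
    issuer: Optional[str]      # issuing CA, or None for non-certificate keys


def baseline(records: list[KeyRecord]) -> dict:
    vulnerable = [r for r in records if not r.algorithm.startswith(PQC_PREFIXES)]
    # Keys with no expiry are assumed to live past 2030 until proven otherwise.
    past_2030 = [r for r in vulnerable
                 if r.not_after is None or r.not_after > date(2030, 12, 31)]
    return {
        "total": len(records),
        "by_algorithm": Counter(r.algorithm for r in records),
        "by_issuer": Counter(r.issuer for r in records if r.issuer),
        "quantum_vulnerable": len(vulnerable),
        "vulnerable_past_2030": len(past_2030),
    }
```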

Phase 1: Internal PKI Migration (2027 – 2028)

- Migrate internal CA hierarchies to PQC. Stand up new root and intermediate CAs using ML-DSA-65 (or ML-DSA-87 if CNSA 2.0 applies). Cross-sign with your existing classical CAs during the transition.

- Begin issuing ML-DSA certificates for internal TLS (service-to-service mTLS, internal APIs). Since you control both endpoints, pure PQC certificates are appropriate — no hybrid complexity needed.

- Migrate code signing and firmware signing to ML-DSA-65 or LMS/XMSS (SP 800-208) depending on your toolchain support and CNSA 2.0 applicability. Code signing is prioritized because signed code has long operational lifetimes and a forged signature could compromise systems for years.

- Update SSH key types for infrastructure access. The Open Quantum Safe (OQS) fork of OpenSSH supports ML-DSA key types; native upstream OpenSSH support has not yet landed as of early 2026. Evaluate whether the OQS fork meets your security and operational requirements for production use, or plan for upstream adoption when available.
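Stateful hash-based signatures add an operational requirement that ML-DSA does not have. Each one-time key index must never be used twice, and a tree of height h supports only 2^h signatures. The sketch below shows a common "persist before sign" pattern for a software-managed state file. The class is hypothetical and does no signing itself. It is not safe for concurrent use across processes. In production, the HSM or signing service would normally own this state.

```python
import json
import os
import tempfile


class OneTimeIndexStore:
    """Hypothetical persist-before-sign counter for one LMS/XMSS key.

    The next index is written durably before the caller signs, so a crash
    can waste an index but can never cause one to be reused.
    """

    def __init__(self, path: str, total_signatures: int):
        self.path = path
        self.total = total_signatures  # e.g. 2**10 for a height-10 tree
        if not os.path.exists(path):
            self._write(0)

    def _read(self) -> int:
        with open(self.path) as f:
            return json.load(f)["next_index"]

    def _write(self, value: int) -> None:
        # Write to a temp file, fsync, then atomically replace the state file.
        directory = os.path.dirname(os.path.abspath(self.path))
        fd, tmp = tempfile.mkstemp(dir=directory)
        with os.fdopen(fd, "w") as f:
            json.dump({"next_index": value}, f)
            f.flush()
            os.fsync(f.fileno())
        os.replace(tmp, self.path)

    def reserve(self) -> int:
        index = self._read()
        if index >= self.total:
            raise RuntimeError("key exhausted: provision a new LMS/XMSS key")
        self._write(index + 1)  # persist first...
        return index            # ...then the caller signs with this index
```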

Phase 2: External and Hybrid Deployments (2028 – 2030)

- Deploy hybrid (composite) certificates on public-facing TLS endpoints as composite certificate standards are finalized and CA/Browser Forum baseline requirements are updated.

- Migrate external-facing code signing certificates (browser extensions, mobile apps, driver signing) to PQC algorithms as platform root stores begin including PQC-signed root certificates.

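- Address IoT and embedded device fleets. Devices that can accept firmware updates should receive PQC-capable crypto stacks. Devices that cannot must be placed on hardware replacement schedules aligned with the 2030 deprecation of 112-bit algorithms such as RSA-2048. At the latest, replacement must precede the 2035 disallowance, which covers P-256 and every other quantum-vulnerable algorithm.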

- Re-key any data-at-rest encryption that uses RSA key wrapping to PQC key encapsulation (ML-KEM).
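For fleet planning, the IoT rule above reduces to a decision per device class. This sketch is illustrative only. The `DeviceClass` fields are hypothetical. The target years encode a simplified reading of NIST IR 8547:

- 112-bit algorithms such as RSA-2048 are deprecated after 2030.
- P-256, RSA-3072, and every other quantum-vulnerable algorithm are disallowed after 2035.
- Unknown algorithms default to the earlier date.

```python
from dataclasses import dataclass

# Year by which each current algorithm should have been migrated away from.
MIGRATE_BY = {
    "RSA-2048": 2030, "ECDSA-P224": 2030,
    "RSA-3072": 2035, "ECDSA-P256": 2035, "ECDSA-P384": 2035,
}


@dataclass(frozen=True)
class DeviceClass:
    name: str
    algorithm: str            # current identity / firmware-signing algorithm
    firmware_updatable: bool  # can it accept a PQC-capable crypto stack?
    fits_ml_dsa_65: bool      # enough memory and bandwidth for ML-DSA-65?


def migration_plan(device: DeviceClass) -> str:
    deadline = MIGRATE_BY.get(device.algorithm, 2030)  # conservative default
    if not device.firmware_updatable:
        return f"{device.name}: replace hardware by end of {deadline}"
    target = "ML-DSA-65" if device.fits_ml_dsa_65 else "ML-DSA-44 (category 1)"
    return f"{device.name}: firmware update to {target} by end of {deadline}"
```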

Phase 3: Legacy Retirement (2030 – 2035)

- Remove classical-only certificates from production. All remaining RSA and ECC certificates should have been replaced or covered by hybrid certificates in Phase 2.

- Transition hybrid certificates to pure PQC as ecosystem support matures and the backward-compatibility requirement diminishes.

- Decommission classical CA hierarchies. Revoke remaining classical-only roots and remove them from trust stores.

- Validate that no quantum-vulnerable algorithms remain in use, achieving full compliance with NIST IR 8547's 2035 disallowance deadline.

What PQC Migration Actually Costs

For private enterprises, the cost drivers are HSM replacement or firmware upgrades, CA platform licensing or migration, application code changes to support larger keys and signatures, network capacity increases to handle larger TLS handshakes, and labor for inventory, testing, and deployment.

The largest hidden cost is delay. Organizations that begin Phase 0 in 2026 spread the investment across a decade. Those that wait until 2030 face compressed timelines, supply-constrained HSM procurement, and the risk of operating non-compliant systems while scrambling to migrate.

Frequently Asked Questions

When will a cryptographically relevant quantum computer exist?

No consensus exists on timing. Estimates range from "within 10 years" to "decades away." The planning frameworks from NIST and NSA assume it could happen within the migration window, which is why they set 2035 as the hard deadline. The harvest-now-decrypt-later threat exists today regardless of when a CRQC arrives.

Should we skip hybrid certificates and go directly to pure PQC?

For systems where you control both endpoints, yes. Direct migration to pure PQC is simpler, avoids hybrid complexity, and eliminates the need to later remove the classical component. Hybrid strategies are specifically for interoperability with legacy systems you cannot update simultaneously.

Is ML-KEM sufficient or should we wait for HQC?

ML-KEM is standardized, validated, and should be deployed now for key exchange. HQC, selected by NIST in March 2025 as a backup KEM based on code theory rather than lattices, will provide algorithm diversity once standardized (expected 2027). There is no reason to wait — deploy ML-KEM now and add HQC support later for defense-in-depth when the standard is finalized.

Do symmetric algorithms need to change?

AES-256 and SHA-384/SHA-512 are not meaningfully threatened by known quantum algorithms. Grover's algorithm provides a quadratic speedup against brute-force key search. In the idealized case, that reduces AES-128 to roughly 64-bit security. Because Grover's algorithm parallelizes poorly, NIST still regards AES-128 as adequate, but CNSA 2.0 requires AES-256. SHA-256 retains roughly 128-bit preimage resistance under Grover. CNSA 2.0 also requires SHA-384 or SHA-512, and NIST IR 8547 deprecates 112-bit symmetric security after 2030. The practical advice: use AES-256 and SHA-384 or above.
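The "64-bit" figure comes from Grover's iteration count. Assume an ideal, error-free quantum computer. Searching an n-bit key space then takes about (π/4)·2^(n/2) sequential Grover iterations. Splitting the search across M machines divides each machine's work by only √M, which is why the speedup is hard to exploit in practice:

```latex
\[
  T(n) \approx \frac{\pi}{4}\,2^{n/2},
  \qquad
  T(128) \approx 2^{63.7},
  \qquad
  T(256) \approx 2^{127.7},
  \qquad
  T_M(n) \approx \frac{\pi}{4}\,\frac{2^{n/2}}{\sqrt{M}} \ \text{per machine}.
\]
```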

What about FIPS 140-3 validation for PQC?

NIST's CAVP is accepting algorithm validation submissions for ML-DSA, ML-KEM, and SLH-DSA. Full FIPS 140-3 module validations with PQC are expected to increase through 2026–2027 as vendors complete testing. The current average validation time exceeds 500 days, so vendors not already in the pipeline face significant lead times. If FIPS validation is a hard requirement for your environment, verify your HSM and crypto library vendor's validation timeline before committing to deployment dates.

How does this affect ACME-based certificate automation?

ACME protocol (RFC 8555) is algorithm-agnostic in principle — certificate signing requests can contain any public key type. In practice, ACME clients and servers need explicit support for ML-DSA key types, larger CSR sizes, and PQC signature verification. The concurrent shift to 200-day (March 2026) and eventually 47-day (2029) public SSL/TLS certificate lifespans makes ACME automation mandatory infrastructure — and the same automation pipeline that handles frequent renewals also enables PQC certificate deployment at scale. Check your ACME client and CA for PQC support before assuming automated issuance will work unchanged.

Axelspire provides PKI consultancy and certificate automation for organizations navigating the PQC transition. Contact us to discuss your migration timeline, or use Ask Axel to get immediate answers on ACME protocol, certificate lifecycle, and PQC migration questions.

References

- National Institute of Standards and Technology (NIST). (2024). FIPS 203: Module-Lattice-Based Key-Encapsulation Mechanism Standard (ML-KEM).

- NIST. (2024). FIPS 204: Module-Lattice-Based Digital Signature Standard (ML-DSA).

- NIST. (2024). FIPS 205: Stateless Hash-Based Digital Signature Standard (SLH-DSA).

- NIST. (2024). IR 8547: Transition to Post-Quantum Cryptography Standards.

- National Security Agency (NSA). (2022). Commercial National Security Algorithm Suite 2.0 (CNSA 2.0).

- The White House. (2022). National Security Memorandum on Promoting United States Leadership in Quantum Computing While Mitigating Risks to Vulnerable Cryptographic Systems (NSM-10).

- NIST. (2020). SP 800-208: Recommendation for Stateful Hash-Based Signature Schemes.

- Ounsworth, M. et al. (2026). Composite ML-DSA for Use in X.509 PKI and CMS. IETF Internet-Draft.

- NIST. (2025). FIPS 206 (Draft): FN-DSA — FFT over NTRU-Lattice-Based Digital Signature Algorithm.

- NIST. (2025). HQC: Fourth Round Selection for Post-Quantum Key Encapsulation.